AI Manga Translator: How It Works and Which Tools Are Actually Good

AI manga translation handles speech bubbles, vertical text, and layout preservation automatically. Here is how the best AI manga translator tools work — and which ones are worth using.

Translating manga isn't like translating a document. The text is inside speech bubbles, sound effects are embedded in the artwork, panels read right to left, and the translated text has to fit back into the original layout without breaking the art.

General-purpose machine translation handles none of this. Purpose-built AI manga translation tools attempt all of it, with varying success.

Here's what's actually happening under the hood.

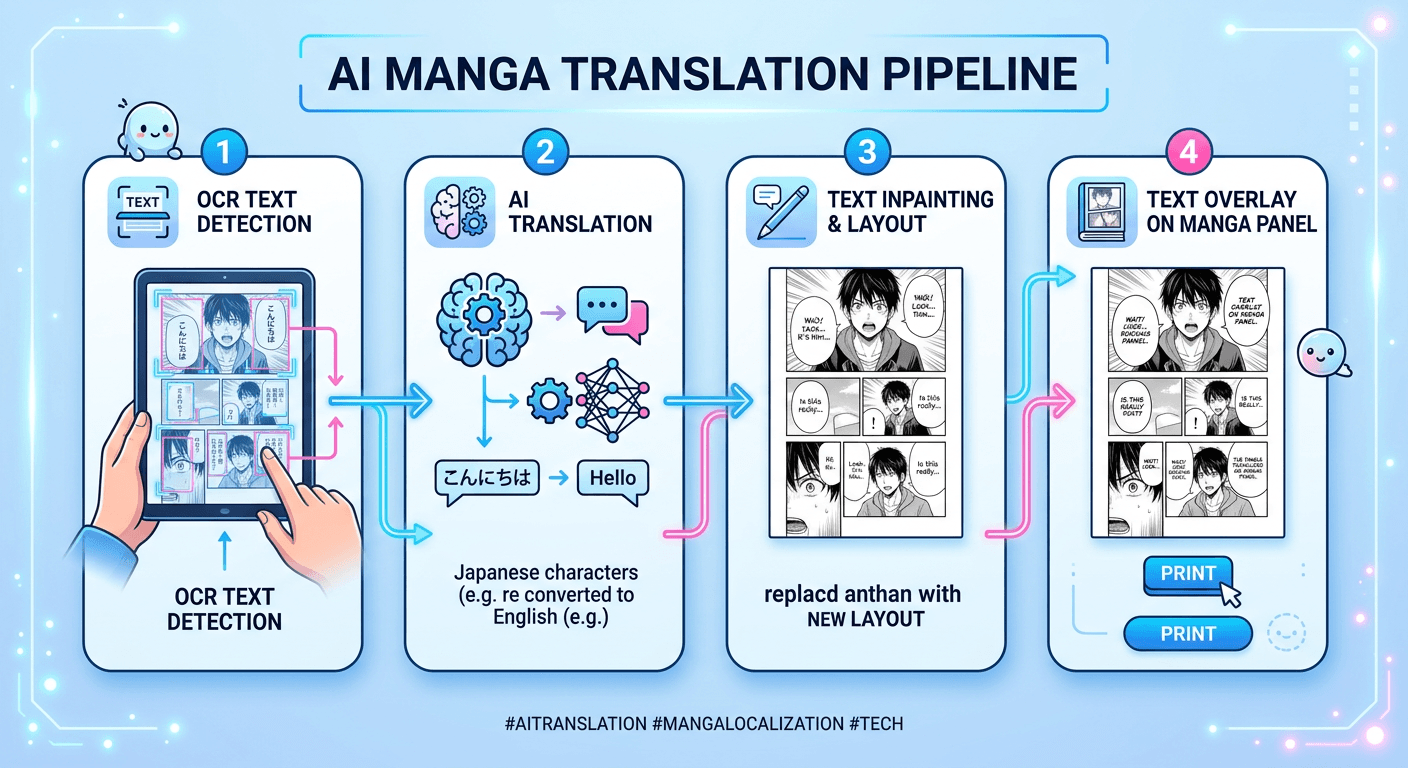

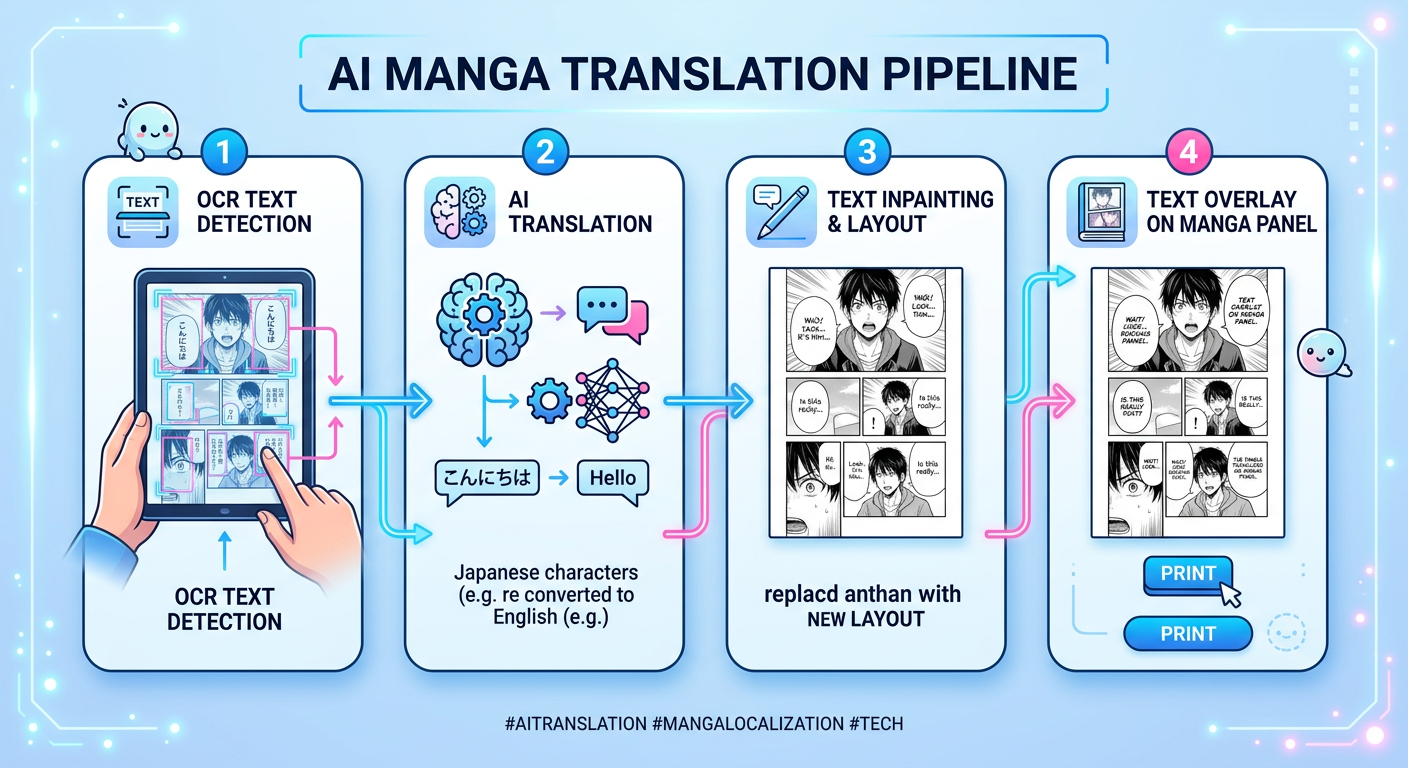

The Four Steps of AI Manga Translation

Step 1: Text Detection

The first challenge is finding text in an image. Manga uses multiple text types:

- Dialog inside speech bubbles (usually horizontal in Western comics, vertical in Japanese manga)

- Sound effects (SFX) drawn directly into panels in stylized fonts

- Narration boxes with story text

- Signs, labels, and background text

AI detection models are trained specifically on manga art styles to identify these regions accurately — including partially obscured text and text wrapped around artwork.
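
Detected regions also have to be put into reading order before they are recognized and translated. The sketch below shows one simple way to do that, assuming each region is an axis-aligned box. The `Region` fields, the `row_tolerance` value, and the row-banding heuristic are all illustrative assumptions, not the method of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Region:
    """Hypothetical detected text region (pixels, origin at top-left)."""
    x: int
    y: int
    w: int
    h: int
    kind: str = "dialog"  # e.g. "dialog", "sfx", "narration", "sign"


def reading_order(regions, row_tolerance=40):
    """Sort regions top-to-bottom, then right-to-left within each row.

    row_tolerance is an assumed tuning value: a region joins the current
    row if its top edge is within this many pixels of the row's first region.
    """
    rows = []
    for region in sorted(regions, key=lambda r: r.y):
        if rows and abs(region.y - rows[-1][0].y) <= row_tolerance:
            rows[-1].append(region)
        else:
            rows.append([region])

    ordered = []
    for row in rows:
        # Japanese manga reads right to left, so the rightmost region is first.
        ordered.extend(sorted(row, key=lambda r: r.x + r.w, reverse=True))
    return ordered
```

Real pages have irregular panels, so a production tool would likely order panels first and then order bubbles within each panel. This heuristic only illustrates the right-to-left rule.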

Step 2: Text Recognition (OCR)

Once text regions are found, OCR (Optical Character Recognition) reads the characters. Japanese OCR is harder than Latin script OCR because:

- Kanji has thousands of distinct characters

- Handwritten and stylized manga fonts don't match clean print fonts

- SFX often use invented or distorted characters

Modern AI models fine-tuned on manga corpora handle this significantly better than general-purpose OCR tools.
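
To make the character-set point concrete: Japanese text mixes hiragana, katakana, and kanji, and the basic kanji block in Unicode alone spans 20,992 code points. The sketch below classifies recognized characters by Unicode block. Using it as a sanity check on OCR output is an assumption about how one might apply it, not a step the tools discussed here are known to perform, and the function names are hypothetical.

```python
def script_of(ch):
    """Classify one character by its Unicode block (basic blocks only)."""
    cp = ord(ch)
    if 0x3040 <= cp <= 0x309F:   # Hiragana block
        return "hiragana"
    if 0x30A0 <= cp <= 0x30FF:   # Katakana block
        return "katakana"
    if 0x4E00 <= cp <= 0x9FFF:   # CJK Unified Ideographs (20,992 code points)
        return "kanji"
    if ch.isascii() and ch.isalnum():
        return "latin"
    return "other"


def script_profile(text):
    """Count non-whitespace characters per script in an OCR result."""
    counts = {}
    for ch in text:
        if ch.isspace():
            continue
        script = script_of(ch)
        counts[script] = counts.get(script, 0) + 1
    return counts
```

For example, `script_profile("やばい")` reports three hiragana characters. A result dominated by `"other"` might suggest that a stylized SFX region was misread and should be flagged for review.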

Step 3: Translation

The extracted text is passed to a translation model. This is where context matters enormously. A word like "やばい" (yabai) can mean "dangerous," "amazing," "terrible," or several other things depending on context — and a good translation model uses surrounding panels and dialog history to pick the right one.

The best manga translation tools use LLMs with context windows large enough to consider the whole chapter, not just individual text bubbles in isolation.
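
One way to give a model that context is to send earlier dialog and a name glossary along with each line. The sketch below only assembles the prompt text and does not call any model. The function name, the prompt wording, and the use of a character count as a stand-in for a token budget are all assumptions made for illustration.

```python
def build_prompt(history, current, glossary=None, max_history_chars=2000):
    """Assemble a translation prompt from earlier dialog plus the current line.

    history: list of (speaker, japanese_text) tuples in reading order.
    The oldest lines are dropped first when the character budget is exceeded.
    """
    kept = []
    used = 0
    # Walk backwards so the most recent dialog is kept preferentially.
    for speaker, text in reversed(history):
        line = f"{speaker}: {text}"
        if used + len(line) > max_history_chars:
            break
        kept.append(line)
        used += len(line)
    kept.reverse()

    parts = [
        "Translate the final line from Japanese to English.",
        "Use the earlier dialog only as context.",
    ]
    if glossary:
        # Fixed renderings keep character names consistent across panels.
        parts.append("Glossary:")
        parts.extend(f"- {src} = {dst}" for src, dst in sorted(glossary.items()))
    parts.append("Earlier dialog:")
    parts.extend(kept or ["(none)"])
    parts.append(f"Line to translate: {current}")
    return "\n".join(parts)
```

With a preceding line about food, a model has a reasonable basis for rendering "やばい" as "amazing" rather than "dangerous". With no history, it has to guess.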

Step 4: Layout and Rendering

The translated text has to go back into the image:

- The original text is erased (inpainted) from the speech bubble

- The translated text is rendered in an appropriate font

- Font size is adjusted to fit the bubble without overflow

- For vertical-to-horizontal translation, the text must be reflowed to fit a bubble shaped for vertical text, and in some cases the bubble shape may need to expand

This step is where many tools fail — text overflow, mismatched fonts, and visible inpainting artifacts are common issues.
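
The font-fitting part of this step can be sketched as a search for the largest size at which the wrapped text still fits the bubble. The version below is a simplified illustration: it assumes every glyph is a fixed fraction of the font size wide (`char_w_ratio`) and every line a fixed fraction tall (`line_h_ratio`), whereas a real renderer would measure the actual font. All names and default values are hypothetical.

```python
import textwrap


def fit_text(text, box_w, box_h, max_size=32, min_size=8,
             char_w_ratio=0.6, line_h_ratio=1.2):
    """Return (font_size, lines) for the largest size that fits, else None.

    Tries sizes from largest to smallest. Width and height are estimated
    from assumed per-glyph ratios, not measured from a font file.
    """
    for size in range(max_size, min_size - 1, -1):
        chars_per_line = int(box_w // (size * char_w_ratio))
        if chars_per_line < 1:
            continue
        # Keep words whole; an overlong word makes this size fail instead.
        lines = textwrap.wrap(text, width=chars_per_line,
                              break_long_words=False)
        too_wide = any(len(line) > chars_per_line for line in lines)
        too_tall = len(lines) * size * line_h_ratio > box_h
        if not too_wide and not too_tall:
            return size, lines
    return None
```

Returning `None` instead of forcing the text in makes overflow an explicit case that the caller has to handle, for example by shortening the translation or flagging the bubble for manual layout.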

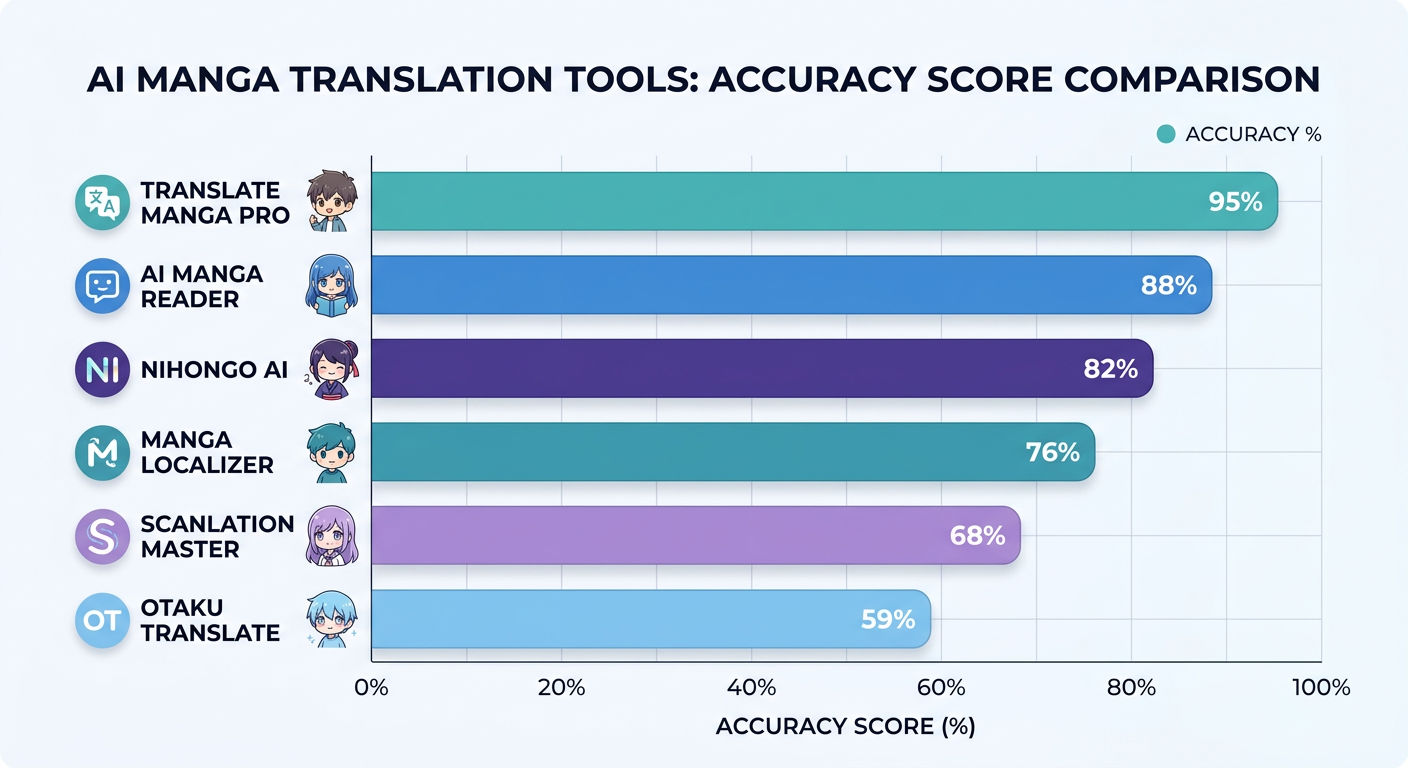

Tool Comparison

| Tool | OCR Accuracy | Layout Preservation | SFX Translation | Free Tier |
|---|---|---|---|---|
| Google Translate (image) | Moderate | Poor | No | Yes |
| DeepL + manual | Good | Manual | No | Limited |
| AI-native manga translator | Best | Yes | Yes | Yes |

The gap between general image translation and purpose-built manga tools is significant, especially for:

- Vertical Japanese text

- Sound effects and onomatopoeia

- Multi-panel context (character names, running jokes, pronoun tracking)

What AI Manga Translation Still Gets Wrong

Even the best tools have consistent failure modes:

- Cultural references that need localization, not just translation (puns, wordplay, honorifics)

- SFX in complex artwork where the text is inseparable from the art

- Low-quality scans where OCR struggles with artifacts

- First chapters of new series where the AI hasn't seen enough context to understand character voices

Human editors still matter for anything aimed at publication quality. For personal reading, AI translation has crossed the threshold of "good enough" for most series.

Try It

Our AI manga translator handles Japanese-to-English translation with automatic OCR, context-aware translation, and speech bubble rendering.