OpenCode: Free Cursor Alternative for Vibe Coding Locally With No Subscriptions

OpenCode is a free, open-source Cursor alternative that runs in your terminal with local Ollama models or any LLM. No credit limits, no $25/month plans — just vibe coding that actually works offline.

The Credit Anxiety Is Real

You're in flow. You've got a feature half-built, the AI is finally understanding your codebase, and then — you hit your credit limit.

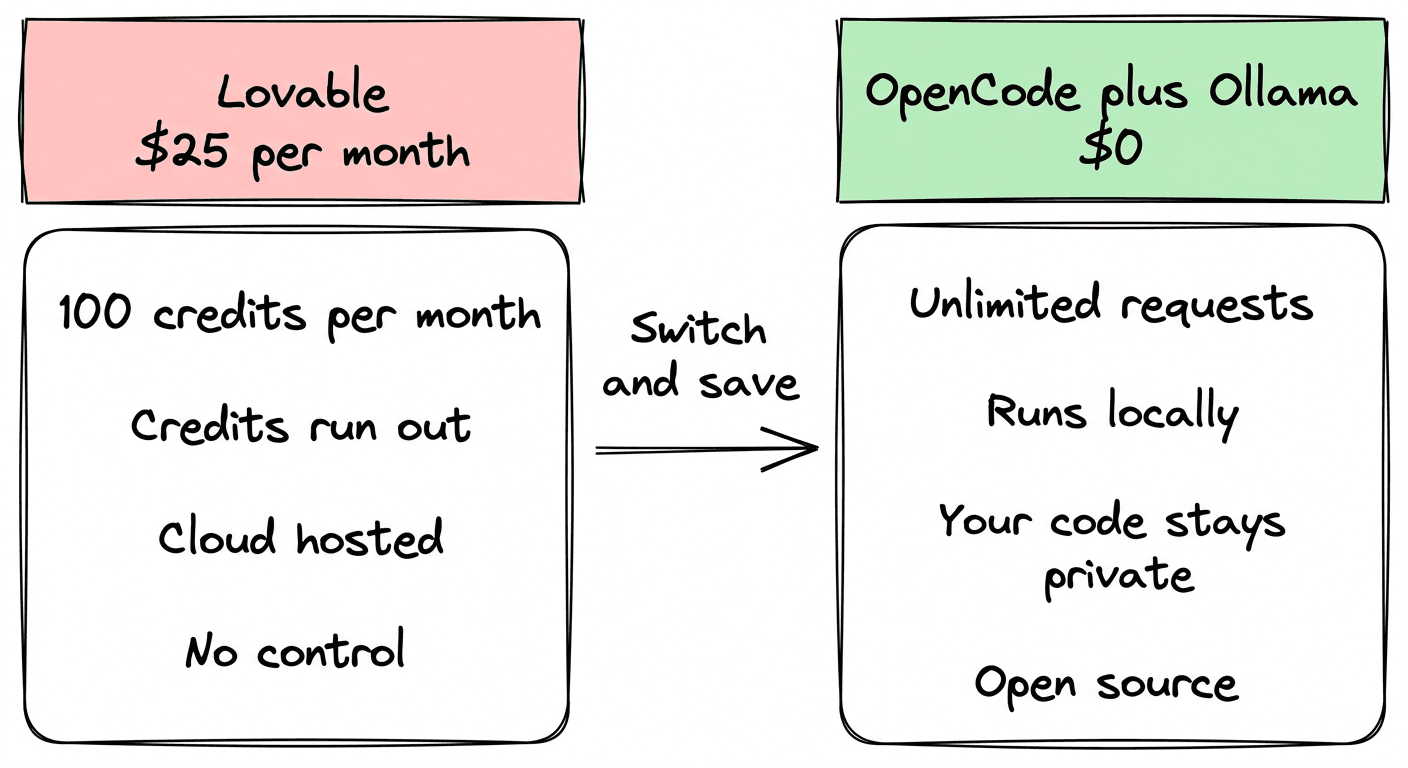

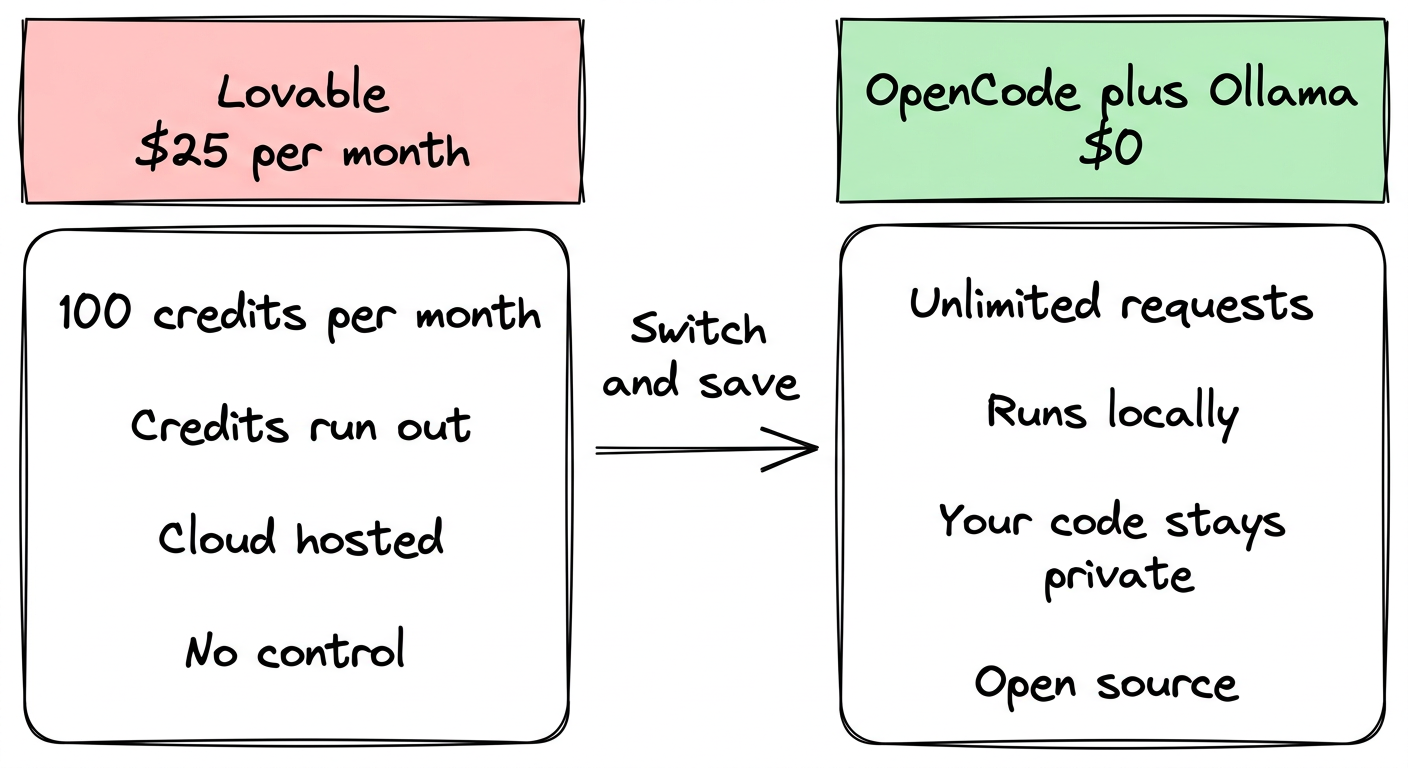

If you've used Lovable, Bolt, or any of the browser-based vibe coding tools, you know this feeling. Lovable's Pro plan is $25/month for 100 credits. That sounds like a lot until you're three features deep on a weekend project and you've burned through 40 credits trying to get a modal to close correctly.

The $50 Business plan gives you the same 100 credits. The Enterprise plan exists if you want to ask a salesperson.

Meanwhile, you're building someone else's SaaS on someone else's infrastructure, with a meter running on every prompt.

There's a better way. It's called OpenCode. It's open source, runs locally in your terminal, works with local models that cost nothing, and has 120K GitHub stars from developers who figured this out before you did.

What OpenCode Actually Is

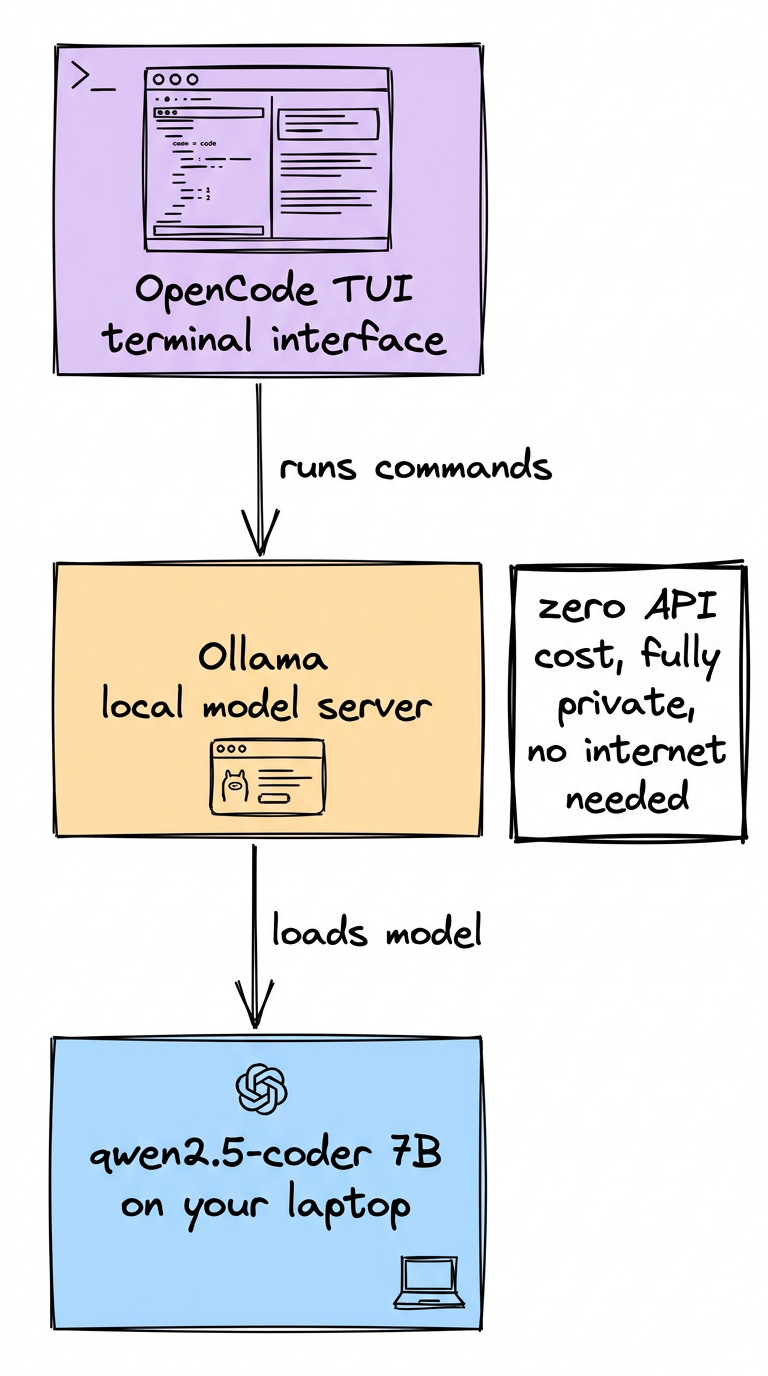

OpenCode is an AI coding agent — like Claude Code or Cursor's agent mode — but fully open source and not locked to any one AI provider.

It runs in your terminal as a TUI (terminal user interface), has a desktop app in beta, and integrates with your IDE. You open it in a project directory, describe what you want to build, and it reads your files, writes code, runs commands, and iterates — same loop as any other vibe coding tool.

The difference is what sits underneath:

- No vendor lock-in — connect OpenAI, Anthropic, Google, or any of 75+ providers

- Local model support — run Ollama, llama.cpp, or LM Studio on your own machine

- Free models on Zen — their own gateway includes completely free models

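- Your code stays under your control: OpenCode does not store your code or context on its own servers, and with a local model nothing leaves your machine (a hosted provider still receives whatever you send it)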

- 100% open source — read the code, self-host it, fork it, contribute to it

The question isn't whether OpenCode is good. It is. The question is which model you point it at and how much that costs — and for a lot of use cases, the answer is zero.

The Free Paths: How to Actually Pay Nothing

Option 1: Local Models with Ollama (Fully Free, Forever)

The cleanest free path is running a model locally via Ollama.

Install Ollama:

# macOS

brew install ollama

# Linux

curl -fsSL https://ollama.com/install.sh | sh

Pull a model:

# Good balance of quality and speed on most machines

ollama pull qwen2.5-coder:7b

# Smaller, faster on older hardware

ollama pull deepseek-coder:1.3b

# Bigger and better, but it is roughly a 20GB download, so plan on 32GB+ RAM

ollama pull qwen2.5-coder:32b

Configure OpenCode to use it — add this to your opencode.json:

{
  "$schema": "https://opencode.ai/config.json",
  "provider": {
    "ollama": {
      "npm": "@ai-sdk/openai-compatible",
      "name": "Ollama",
      "options": {
        "baseURL": "http://localhost:11434/v1"
      },
      "models": {
        "qwen2.5-coder:7b": {
          "name": "Qwen2.5 Coder 7B"
        }
      }
    }
  },
  "model": "ollama/qwen2.5-coder:7b"
}

Start Ollama, open OpenCode, and you're running a capable coding agent with zero API costs. Your laptop is the compute.
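Before launching OpenCode it can help to confirm that the model you configured has actually been pulled. The sketch below is one way to check, assuming `ollama list` prints a header row followed by one model per line with the tag in the first column. `has_model` is a hypothetical helper name, not part of Ollama or OpenCode.

```shell
# Hypothetical helper: succeeds if the given model tag appears in the first
# column of `ollama list` output supplied on stdin (the header row is skipped).
has_model() {
  awk -v want="$1" 'NR > 1 && $1 == want { found = 1 } END { exit !found }'
}
```

A typical call would be `ollama list | has_model qwen2.5-coder:7b || ollama pull qwen2.5-coder:7b`, which pulls the model only when it is missing.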

What to expect: 7B models are solid for straightforward tasks — UI components, bug fixes, writing functions from clear descriptions. For complex multi-file refactors or architecture decisions, a 32B model or a paid API gives noticeably better results.
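The trade-off above can be written down as a small lookup. This is only a sketch: `pick_model` is a hypothetical helper, and the 8 GB and 32 GB cut-offs are assumptions rather than published requirements, so adjust them to what your machine handles comfortably.

```shell
# Hypothetical helper: map installed RAM (whole GB) to one of the model tags
# mentioned in this article. The 8 and 32 GB thresholds are assumptions.
pick_model() {
  ram_gb=$1
  if [ "$ram_gb" -ge 32 ]; then
    echo "qwen2.5-coder:32b"
  elif [ "$ram_gb" -ge 8 ]; then
    echo "qwen2.5-coder:7b"
  else
    echo "deepseek-coder:1.3b"
  fi
}
```

With that in place, `ollama pull "$(pick_model 16)"` would pull qwen2.5-coder:7b on a 16 GB laptop.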

Option 2: OpenCode Zen's Free Models

OpenCode runs its own model gateway called Zen. Most of the models on it cost money (pay-as-you-go, no markup, starting around $0.50/1M input tokens for the cheapest options), but two are genuinely free:

- Big Pickle — free tier model, good for quick tasks

- MiniMax M2.5 Free — free tier, useful for lighter workloads

To use them, sign up at opencode.ai/zen, add billing details (required even for free models), get your API key, and run /connect inside OpenCode.

These are free now because OpenCode is using them to improve the platform. They may not be free forever, but they exist today.

Option 3: GitHub Copilot (If You Already Have It)

If you're already paying for GitHub Copilot ($10/month or included with some plans), OpenCode connects to it directly. You're not paying extra — you're just using the subscription you already have in a better interface.

Run /connect in OpenCode, select GitHub Copilot, authenticate, and you're using OpenCode's TUI with Copilot's model access.

Installing OpenCode

# One-liner

curl -fsSL https://opencode.ai/install | bash

# Or via npm

npm i -g opencode-ai@latest

# macOS (recommended — always up to date)

brew install anomalyco/tap/opencode

# Or grab the desktop app

# https://opencode.ai/download

Verify it works:

opencode --version

Open it in any project directory:

cd your-project

opencode

The Vibe Coding Workflow in OpenCode

OpenCode has two built-in agents you switch between with Tab:

- build — full access, writes files, runs bash commands, the default for actually shipping code

- plan — read-only, analyzes and plans without touching anything

The workflow for vibe coding:

- Open OpenCode in your project directory

- Switch to plan mode, describe what you want to build

- Read the plan, correct anything that's wrong

- Switch to build mode, say "go"

- Watch it work

The plan → build split is something Lovable and Bolt don't really give you. You're either generating or you're not. Here, you can explore and reason before you commit — especially useful when you don't want the agent to blow up a file you care about.

Running parallel sessions is also built in. Open multiple OpenCode sessions in the same project to work on independent features simultaneously. One session fixing a bug, another building a new component. This is genuinely faster than single-threaded vibe coding.

What You're Getting vs. Paying For in Other Tools

Let's be direct about the math.

Lovable: $25/month for 100 credits. Credits don't roll over (on the base plan), and 100 credits can evaporate in a single afternoon of active building. Your project lives in their cloud. The $50/month Business plan keeps the same credit limit and adds team features.

Bolt.new (StackBlitz): a similar credit-based model, with an entry paid tier at $20/month. Also cloud-hosted.

Cursor: $20/month. "Fast" requests are limited, after which you are throttled to slower models. It is less a vibe coding tool than an IDE with AI features.

OpenCode + Ollama: $0/month. Unlimited requests. Runs on your machine. Your code never leaves your computer. Open source, so you can audit exactly what it does.

OpenCode + Zen (Gemini Flash): about $0.50 per 1 million input tokens and $3.00 per 1 million output tokens. Casual use works out to roughly $2-5/month and heavy use to maybe $10-15/month; for example, 4 million input tokens plus 1 million output tokens costs $5. Still a fraction of $25-50/month, with no credit ceiling.
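The pay-as-you-go math is simple enough to check yourself. The sketch below multiplies token volumes by the rates quoted above ($0.50 and $3.00 per million input and output tokens). `zen_cost` is a hypothetical helper, and the token volumes in the usage note are illustrative assumptions, not measurements.

```shell
# Hypothetical helper: estimate monthly spend in USD.
# $1 = input tokens in millions, $2 = output tokens in millions.
# Rates are the ones quoted in this article and may change.
zen_cost() {
  awk -v in_m="$1" -v out_m="$2" \
    'BEGIN { printf "%.2f\n", in_m * 0.50 + out_m * 3.00 }'
}
```

Here `zen_cost 4 1` prints 5.00 and `zen_cost 10 3` prints 14.00, both under a $25 subscription.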

The credit-based tools make sense for one specific user: someone who wants a fully managed, no-setup experience and doesn't mind the cost. If you're technical enough to be reading this, you're technical enough to get more value from OpenCode.

When Local Models Are Good Enough

The common objection: "But the models aren't as good."

True — a 7B model running on your laptop isn't Claude Sonnet. But a lot of vibe coding tasks don't require Claude Sonnet:

- Building a CRUD UI from a data model

- Writing form validation logic

- Adding a new route to an existing API

- Fixing a specific bug you can describe precisely

- Generating boilerplate for a well-understood pattern

Where local models fall short:

- Complex architectural decisions across many files

- Ambiguous requirements that need interpretation

- Debugging subtle, multi-system issues

- Anything requiring broad world knowledge

The practical approach: use a local model for the work it handles well (fast, free, private) and connect to a paid model for the sessions that actually need it. Switching takes one /connect command to add the provider and a one-line change to the "model" entry in opencode.json.
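The config change amounts to editing the top-level `"model"` entry. The following is a sketch, assuming opencode.json contains exactly one `"model": "..."` entry as in the example config earlier. `set_model` is a hypothetical helper, and it keeps a `.bak` copy of the file.

```shell
# Hypothetical helper: rewrite the "model" value in an opencode.json file.
# $1 = path to the config, $2 = new provider/model id.
# Assumes a single "model": "..." entry and an id without | or & characters.
set_model() {
  sed -i.bak -E \
    "s|(\"model\"[[:space:]]*:[[:space:]]*\")[^\"]*(\")|\\1$2\\2|" "$1"
}
```

For instance, `set_model opencode.json ollama/qwen2.5-coder:32b` switches to the larger local model; a hosted provider's model id would go in the same place once the provider is connected.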

LSP Support: The Feature Nobody Talks About

Most vibe coding tools work with raw text. They write code but don't understand your project's types, imports, or errors at a language level.

OpenCode has built-in Language Server Protocol (LSP) support and automatically loads the right language server for your stack, so the model sees type errors, import paths, and project context the same way your IDE does.

For TypeScript projects especially, this makes a noticeable difference. The agent isn't guessing at types — it's reading them.

Get Started Now

# 1. Install OpenCode

brew install anomalyco/tap/opencode

# 2. Install Ollama for free local models and start its server

brew install ollama

brew services start ollama

# 3. Pull a coding model

ollama pull qwen2.5-coder:7b

# 4. Add opencode.json to your project

cd your-project

cat > opencode.json << 'EOF'
{
  "$schema": "https://opencode.ai/config.json",
  "provider": {
    "ollama": {
      "npm": "@ai-sdk/openai-compatible",
      "name": "Ollama",
      "options": {
        "baseURL": "http://localhost:11434/v1"
      },
      "models": {
        "qwen2.5-coder:7b": {
          "name": "Qwen2.5 Coder 7B"
        }
      }
    }
  },
  "model": "ollama/qwen2.5-coder:7b"
}
EOF

# 5. Start coding

opencode

That's a fully functional AI coding agent running locally, for free, with no credit limits, in under 10 minutes.

The Honest Take

OpenCode isn't going to replace your judgment. Vibe coding tools — free or paid — still require someone who can tell a good output from a bad one, catch the subtle bugs, and make architectural decisions.

What OpenCode does is remove the artificial ceiling that subscription tools put on your iteration speed. You're not rationing prompts. You're not watching a credit counter. You're just building.

If you're already paying $25/month for Lovable, try OpenCode with a local model for a week. You'll probably cancel.

If you want to see how this kind of local-first, cost-effective tooling fits into a real development workflow — or how we use it when building client projects — book a free AI audit. We'll show you what our actual setup looks like and whether something similar makes sense for you.