Multi-Agent AI Chat: What It Is and How to Build One

Multi-agent AI systems use multiple specialized models working together instead of one model doing everything. Here is what that means in practice and when you actually need it.

A single AI model is good at a lot of things. But "good at a lot of things" isn't the same as "great at any specific thing." When you need a system that researches, reasons, writes, and acts — all in one conversation — a single model hits its limits fast.

Multi-agent architecture addresses this by splitting the work: specialized agents each handle a specific task and hand off to each other.

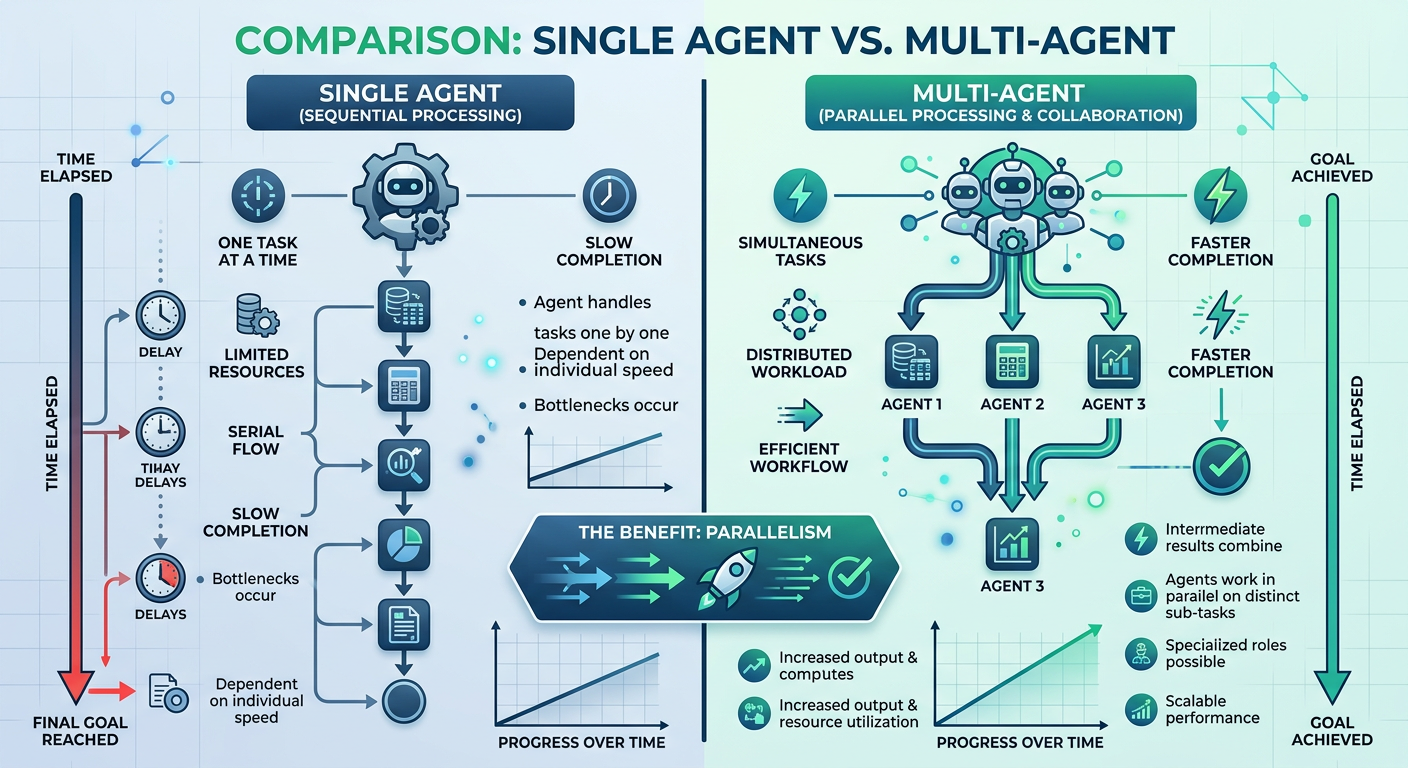

Single Agent vs Multi-Agent: The Real Difference

Single agent:

- One model, one context window

- Does everything sequentially

- Context gets crowded fast on complex tasks

- Single point of failure

Multi-agent:

- Orchestrator agent routes tasks to specialists

- Agents run in parallel where possible

- Each agent has a focused context

- Better results on complex, multi-step tasks

The tradeoff is complexity. A single-agent system is easier to build and debug. Multi-agent makes sense when the task has genuinely distinct phases that benefit from specialization.

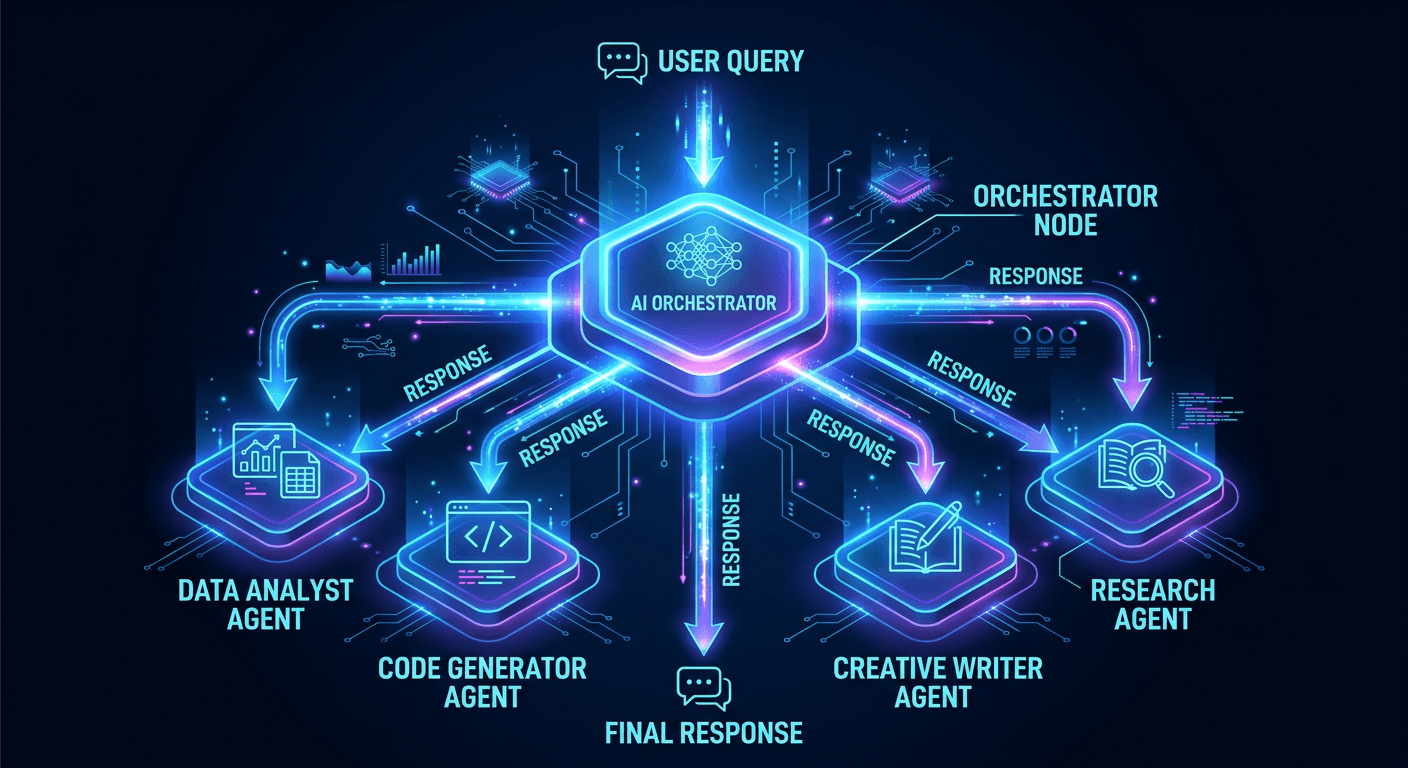

The Core Architecture

A typical multi-agent chat system has:

1. Orchestrator Agent

Receives the user's request, breaks it into subtasks, routes each to the right specialist, and assembles the final response. This is the only agent the user interacts with directly.

2. Specialist Agents

Each handles one type of task:

- Research agent — searches the web or a knowledge base

- Code agent — writes and runs code

- Summarization agent — condenses long documents

- Memory agent — maintains conversation history and user context

3. Tool Layer

Agents use tools to take actions: web search, code execution, API calls, database queries. The tool layer is what separates a chat interface from an autonomous system.
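The three layers above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a reference implementation: the `Agent` and `Orchestrator` classes, the `search_kb` tool, and the keyword-based routing are all hypothetical stand-ins, and each handler stands in for what would be a model call in a real system.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

# Tool layer: plain functions that agents may call to take actions.
# search_kb is a hypothetical stand-in for a real knowledge-base search.
def search_kb(query: str) -> str:
    return f"[kb results for: {query}]"


@dataclass
class Agent:
    """A specialist: a name, a handler, and the tools it may use.

    In a real system `handle` would wrap a model call; here it is a
    plain function so the sketch runs without network access.
    """
    name: str
    handle: Callable[[str, Dict[str, Callable[[str], str]]], str]
    tools: Dict[str, Callable[[str], str]] = field(default_factory=dict)

    def run(self, task: str) -> str:
        return self.handle(task, self.tools)


def research_handler(task, tools):
    return tools["search_kb"](task)


def summarize_handler(task, tools):
    # Stand-in for a summarization model call: keep the first 40 characters.
    return task[:40]


@dataclass
class Orchestrator:
    """The only component the user talks to directly."""
    specialists: Dict[str, Agent]

    def route(self, message: str) -> List[str]:
        # Assumption: naive keyword routing. A real orchestrator would
        # typically ask a model to choose the specialists.
        chosen = []
        if "find" in message.lower():
            chosen.append("research")
        if "summarize" in message.lower():
            chosen.append("summarize")
        return chosen or ["research"]

    def respond(self, message: str) -> str:
        parts = [self.specialists[name].run(message) for name in self.route(message)]
        return "\n".join(parts)


orchestrator = Orchestrator(
    specialists={
        "research": Agent("research", research_handler, {"search_kb": search_kb}),
        "summarize": Agent("summarize", summarize_handler),
    }
)
print(orchestrator.respond("find refund policy"))
```

Keeping the tools on each `Agent` rather than on the orchestrator is one way to give every specialist a narrow, explicit set of actions.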

A Real Example: Customer Support Multi-Agent

Here's what a multi-agent customer support system looks like:

    User: "My order #4821 hasn't arrived and it's been 2 weeks"

    Orchestrator receives request
      → Routes to: Order Lookup Agent (fetch order status from Shopify)
      → Routes to: Policy Agent (check refund/replacement policy)
      → Synthesizes both results
      → Responds: "Your order shipped on Feb 24 but shows no tracking updates
         since Feb 26. Our policy covers replacement after 10 business days.
         I can initiate a replacement now. Would you like me to?"

A single agent could do this too, but it would need Shopify API access, the policy documents, and the conversation history all handled through one context window, and unless it issues both tool calls at once, the order lookup and policy check run one after the other.
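The support flow above might look roughly like this in code. It is a sketch under assumptions: `order_lookup_agent`, `policy_agent`, and `handle_support_request` are hypothetical names, the order and policy data are hard-coded from the example rather than fetched from Shopify, and the synthesis step is a string template where a real system would likely use a model call.

```python
import asyncio

# Hypothetical stand-ins for the two specialists. A real Order Lookup Agent
# would query the store's API; here the data is hard-coded from the example.
async def order_lookup_agent(order_id: str) -> dict:
    await asyncio.sleep(0.05)  # simulate network latency
    return {"order_id": order_id, "shipped": "Feb 24", "last_tracking_update": "Feb 26"}


async def policy_agent(issue: str) -> dict:
    await asyncio.sleep(0.05)  # simulate a policy-document lookup
    return {"issue": issue, "replacement_after_business_days": 10}


async def handle_support_request(order_id: str) -> str:
    # The two lookups are independent, so run them concurrently.
    order, policy = await asyncio.gather(
        order_lookup_agent(order_id),
        policy_agent("late delivery"),
    )
    # Synthesis step: a template here, a model call in a real system.
    return (
        f"Your order shipped on {order['shipped']} but shows no tracking updates "
        f"since {order['last_tracking_update']}. Our policy covers replacement after "
        f"{policy['replacement_after_business_days']} business days."
    )


reply = asyncio.run(handle_support_request("4821"))
print(reply)
```

Because the lookups run through `asyncio.gather`, the total wait is roughly that of the slower lookup rather than the sum of both.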

How to Build One

Three practical routes to a multi-agent system today:

Option 1: Claude Code with agent mode

Claude Code's built-in @general subagent handles multi-step research and task delegation natively. For most use cases, this is enough.

Option 2: LangGraph or CrewAI

Python frameworks purpose-built for multi-agent orchestration. LangGraph gives you fine-grained control over agent state and routing. CrewAI has a higher-level API.

Option 3: Build from scratch with the Claude API

Use the Messages API to build your own orchestrator. Each agent is a separate API call. You control routing logic, context management, and tool access explicitly.

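    from concurrent.futures import ThreadPoolExecutor

    # routing_agent, research_agent, code_agent, and synthesis_agent are
    # placeholders: each one wraps its own Messages API call.
    def orchestrate(user_message: str) -> str:
        # Step 1: Route the request into per-specialist subtasks
        route = routing_agent(user_message)

        # Step 2: Run specialist agents in parallel. Submit the callables
        # to the pool; calling them inline would run them one after another.
        with ThreadPoolExecutor() as pool:
            research = pool.submit(research_agent, route.research_task)
            code = pool.submit(code_agent, route.code_task)
            results = [research.result(), code.result()]

        # Step 3: Synthesize the specialist results into one reply
        return synthesis_agent(results)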
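One way to make "each agent is a separate API call" concrete is to build every agent from its own system prompt and a shared request function. The sketch below is an assumption-laden illustration: `make_agent`, `Complete`, and `fake_complete` are hypothetical names, and `fake_complete` stands in for a single model request so the example runs offline.

```python
from typing import Callable

# `Complete` is a hypothetical signature standing in for one model request:
# it takes a system prompt and a user message and returns the reply text.
Complete = Callable[[str, str], str]


def make_agent(system_prompt: str, complete: Complete) -> Callable[[str], str]:
    """Build an agent that sends only its own prompt and task to the model."""
    def agent(task: str) -> str:
        # Each call starts from a fresh, focused context: no shared history.
        return complete(system_prompt, task)
    return agent


# Offline stand-in for the model so the sketch runs without an API key.
def fake_complete(system: str, user: str) -> str:
    return f"({system}) {user}"


research_agent = make_agent("You research facts.", fake_complete)
code_agent = make_agent("You write code.", fake_complete)

print(research_agent("population of Oslo"))
print(code_agent("reverse a string in Python"))
```

To use a real model, swap `fake_complete` for a function that makes one Messages API request and returns the text of the reply. The agents themselves would not need to change.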

When You Don't Need Multi-Agent

Multi-agent adds complexity. Don't reach for it unless at least one of these applies:

- The task has genuinely distinct phases (research → reason → write → act)

- A single model's context window is genuinely too small

- Parallelism would meaningfully speed up the response

- You need different permissions or tool access for different subtasks
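The last criterion, different tool access per subtask, can be enforced with a simple allowlist. This is a minimal sketch, assuming permissions are static per agent; the tool functions, agent names, and `call_tool` helper are hypothetical and not taken from any particular framework.

```python
from typing import Callable, Dict, Set

# Hypothetical tools. issue_refund is the sensitive one.
def lookup_order(order_id: str) -> str:
    return f"order {order_id}: shipped"


def issue_refund(order_id: str) -> str:
    return f"refund issued for order {order_id}"


TOOLS: Dict[str, Callable[[str], str]] = {
    "lookup_order": lookup_order,
    "issue_refund": issue_refund,
}

# Assumption: permissions are a static allowlist of tool names per agent.
PERMISSIONS: Dict[str, Set[str]] = {
    "order_lookup_agent": {"lookup_order"},
    "refund_agent": {"lookup_order", "issue_refund"},
}


def call_tool(agent_name: str, tool_name: str, arg: str) -> str:
    """Run a tool only if the calling agent is allowed to use it."""
    if tool_name not in PERMISSIONS.get(agent_name, set()):
        raise PermissionError(f"{agent_name} may not call {tool_name}")
    return TOOLS[tool_name](arg)


print(call_tool("order_lookup_agent", "lookup_order", "4821"))
```

With this split, an agent that only reads order status cannot issue a refund even if its prompt is manipulated, because the check happens outside the model.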

For most chatbots and simple Q&A systems, a single well-prompted model is faster, cheaper, and easier to debug.
