How to Build a Multi-Agent AI Architecture (With Real Examples)

Multi-agent AI systems let you break complex tasks into specialized components that run in sequence or in parallel. Here is how to design, build, and debug one from scratch.

A single LLM is good at following instructions. A multi-agent system is good at completing work — real, multi-step work that requires different capabilities, tools, and context at different stages.

The distinction matters when you're trying to automate anything non-trivial: customer support that looks up orders and drafts responses, content pipelines that research and write and format, lead gen tools that find and enrich and personalize.

This is a practical guide to building these systems.

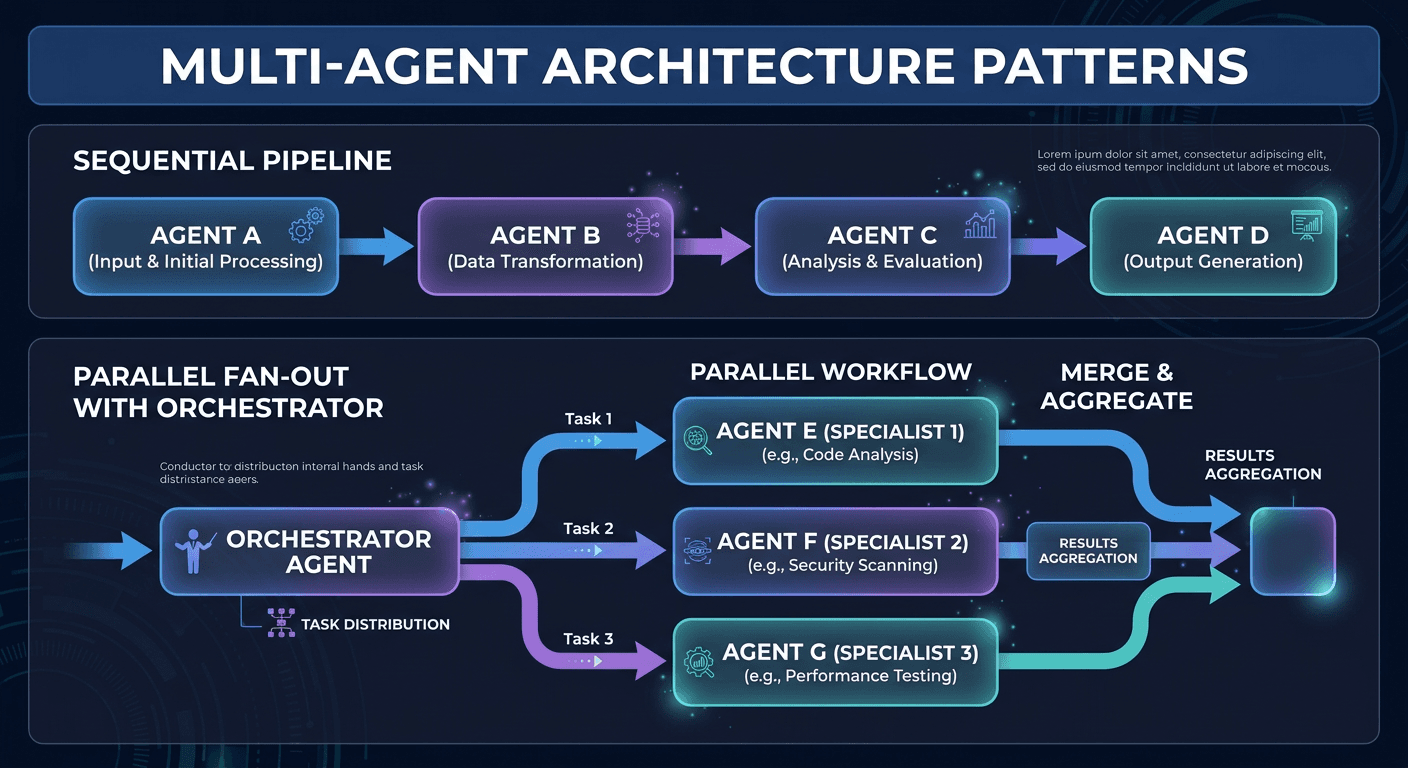

The Two Core Patterns

Pattern 1: Sequential Pipeline

Each agent passes its output to the next. Use this when steps are genuinely dependent — step 2 can't start until step 1 finishes.

Research Agent → Analysis Agent → Writing Agent → Review Agent

Example: Blog post generation

- Research agent finds sources and facts

- Analysis agent identifies key points and angles

- Writing agent drafts the post

- Review agent checks for accuracy and tone
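The pipeline above can be sketched as a chain of functions, where each stage receives the previous stage's output. This is only a minimal sketch: the four stage functions and `run_pipeline` are hypothetical stand-ins that tag their input so the data flow is visible. In a real system each stage would wrap an LLM call.

```python
from typing import Callable, List

# Assumption for illustration: an agent is a plain function from text to text.
Agent = Callable[[str], str]


def research_agent(topic: str) -> str:
    return f"sources for: {topic}"


def analysis_agent(research: str) -> str:
    return f"key points from ({research})"


def writing_agent(analysis: str) -> str:
    return f"draft based on ({analysis})"


def review_agent(draft: str) -> str:
    return f"reviewed: {draft}"


def run_pipeline(stages: List[Agent], initial_input: str) -> str:
    """Feed each stage's output into the next stage, in order."""
    output = initial_input
    for stage in stages:
        output = stage(output)
    return output


BLOG_PIPELINE = [research_agent, analysis_agent, writing_agent, review_agent]
result = run_pipeline(BLOG_PIPELINE, "multi-agent systems")
print(result)
```

Because every stage blocks on the one before it, total latency is the sum of all stage latencies. That is the price of genuinely dependent steps.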

Pattern 2: Parallel Fan-out

The orchestrator sends tasks to multiple agents simultaneously, then collects results. Use this when steps are independent.

                 ┌→ Market Research Agent ─┐
Orchestrator ────├→ Competitor Agent ──────├→ Synthesis Agent
                 └→ Customer Data Agent ───┘

Example: Competitive analysis

- Agent 1: searches for market size data

- Agent 2: analyzes competitor pricing

- Agent 3: pulls customer review sentiment

All three run in parallel. Results are synthesized into one report.
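A minimal sketch of the fan-out step, assuming each agent is an independent function of the same input. The three agent functions, `synthesis_agent`, and `fan_out` are hypothetical stubs that return placeholder strings. Only the concurrency structure is the point here.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict


def market_research_agent(company: str) -> str:
    return f"market size data for {company}"


def competitor_agent(company: str) -> str:
    return f"competitor pricing for {company}"


def customer_data_agent(company: str) -> str:
    return f"review sentiment for {company}"


def synthesis_agent(findings: Dict[str, str]) -> str:
    # Sort keys so the report does not depend on completion order.
    return " | ".join(f"{name}: {findings[name]}" for name in sorted(findings))


def fan_out(agents: Dict[str, Callable[[str], str]], task: str) -> Dict[str, str]:
    """Submit every agent at once, then block until all results are in."""
    with ThreadPoolExecutor(max_workers=len(agents) or 1) as executor:
        futures = {name: executor.submit(fn, task) for name, fn in agents.items()}
        return {name: future.result() for name, future in futures.items()}


AGENTS = {
    "market": market_research_agent,
    "competitor": competitor_agent,
    "customers": customer_data_agent,
}
report = synthesis_agent(fan_out(AGENTS, "Acme"))
print(report)
```

Threads suit this pattern because LLM calls are I/O-bound: the agents spend most of their time waiting on the network, so wall-clock time approaches that of the slowest agent, not the sum of all three.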

Building It With the Claude API

Here's a minimal working orchestrator:

import json
from concurrent.futures import ThreadPoolExecutor

import anthropic

client = anthropic.Anthropic()

# RESEARCH_SYSTEM_PROMPT, CODE_SYSTEM_PROMPT, DATA_SYSTEM_PROMPT and the tool
# definitions (web_search_tool, code_execution_tool, database_tool) are assumed
# to be defined elsewhere in your project.


def run_agent(system_prompt: str, user_message: str, tools: list = None) -> str:
    """Run a single agent and return its text output."""
    # Only send the tools parameter when tools were actually provided.
    extra = {"tools": tools} if tools else {}
    response = client.messages.create(
        model="claude-sonnet-4-6",
        max_tokens=2048,
        system=system_prompt,
        messages=[{"role": "user", "content": user_message}],
        **extra,
    )
    # A response can mix text and tool_use blocks; keep only the text.
    # This is a single call: running a requested tool and sending the result
    # back to the model is not handled here.
    return "".join(block.text for block in response.content if block.type == "text")


def orchestrate(user_request: str) -> str:
    # Step 1: Routing agent decides what to do
    routing_response = run_agent(
        system_prompt="You are a router. Analyze the request and output JSON with keys: needs_research (bool), needs_code (bool), needs_data (bool)",
        user_message=user_request,
    )
    route = json.loads(routing_response)

    # Step 2: Run needed agents in parallel
    futures = {}
    with ThreadPoolExecutor() as executor:
        if route.get("needs_research"):
            futures["research"] = executor.submit(
                run_agent, RESEARCH_SYSTEM_PROMPT, user_request, [web_search_tool]
            )
        if route.get("needs_code"):
            futures["code"] = executor.submit(
                run_agent, CODE_SYSTEM_PROMPT, user_request, [code_execution_tool]
            )
        if route.get("needs_data"):
            futures["data"] = executor.submit(
                run_agent, DATA_SYSTEM_PROMPT, user_request, [database_tool]
            )
        # result() blocks until each agent finishes and re-raises its errors.
        results = {k: v.result() for k, v in futures.items()}

    # Step 3: Synthesis agent assembles final response
    return run_agent(
        system_prompt="Synthesize these agent outputs into a clear, coherent response.",
        user_message=f"User request: {user_request}\n\nAgent results: {json.dumps(results)}",
    )
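One fragile spot in the orchestrator is `json.loads(routing_response)`: a model may wrap its JSON in a markdown fence or add a sentence around it, and the call then raises. The helper below is a hedged sketch of a more forgiving parser. `parse_route` and `ROUTE_KEYS` are hypothetical names, and defaulting every flag to `False` is an assumption, not the only reasonable policy.

```python
import json
from typing import Dict

ROUTE_KEYS = ("needs_research", "needs_code", "needs_data")


def parse_route(raw: str) -> Dict[str, bool]:
    """Extract routing flags from model text; missing or invalid flags become False."""
    no_route = {key: False for key in ROUTE_KEYS}
    # Take the outermost {...} span so surrounding prose or fences are ignored.
    start, end = raw.find("{"), raw.rfind("}")
    if start == -1 or end <= start:
        return no_route
    try:
        data = json.loads(raw[start:end + 1])
    except json.JSONDecodeError:
        return no_route
    # Accept only a literal JSON true; strings like "yes" do not count.
    return {key: data.get(key) is True for key in ROUTE_KEYS}


wrapped = 'Sure:\n```json\n{"needs_research": true, "needs_code": false}\n```'
print(parse_route(wrapped))
```

If every flag comes back `False`, no specialist agent runs. Depending on the workload, retrying the router or falling back to a single general-purpose agent may be a better choice than continuing with empty results.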

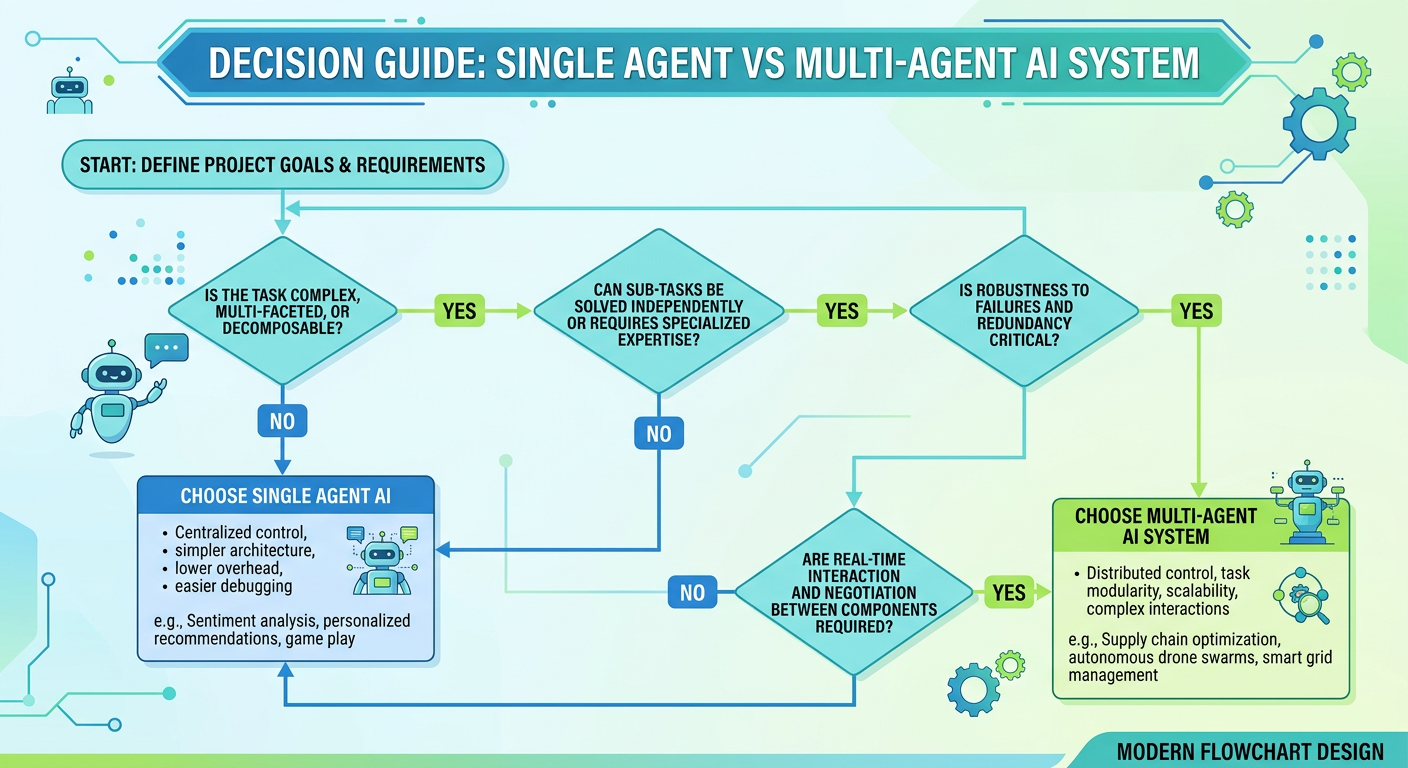

When to Use Which Pattern

| Situation | Recommendation |
|---|---|
| Simple Q&A, one data source | Single agent |
| Sequential workflow, step B needs step A's output | Sequential pipeline |
| Independent parallel tasks | Fan-out pattern |
| Complex task with both sequential and parallel phases | Hybrid |
| Real-time, latency-sensitive | Single agent (multi-agent adds latency) |

Designing Agent System Prompts

Each agent's system prompt should define:

- Role: What this agent is and isn't responsible for

- Output format: Exactly what structure the orchestrator expects

- Failure behavior: What to return if it can't complete the task

- Scope limits: What to refuse (prevents agents from doing each other's jobs)

Example — Research Agent:

You are a research agent. Your only job is to find factual information.

Given a research question, return a JSON object:

{
  "facts": ["fact 1", "fact 2", ...],
  "sources": ["url or description"],
  "confidence": "high|medium|low"
}

Do NOT write prose, make recommendations, or generate code.

If you cannot find reliable information, return confidence: "low" and explain why.
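A prompt can ask for a structure, but the orchestrator should still check that it got one. The sketch below validates the three keys named in the prompt above. `ResearchResult` and `parse_research_output` are hypothetical names. Note that the prompt also asks the agent to explain low confidence but defines no field for it, so this sketch does not look for one.

```python
import json
from dataclasses import dataclass
from typing import List

VALID_CONFIDENCE = ("high", "medium", "low")


@dataclass
class ResearchResult:
    facts: List[str]
    sources: List[str]
    confidence: str


def parse_research_output(raw: str) -> ResearchResult:
    """Parse and validate the research agent's JSON; raise ValueError on any mismatch."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"output is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("output must be a JSON object")
    for key in ("facts", "sources"):
        value = data.get(key)
        if not isinstance(value, list) or not all(isinstance(v, str) for v in value):
            raise ValueError(f"'{key}' must be a list of strings")
    if data.get("confidence") not in VALID_CONFIDENCE:
        raise ValueError("'confidence' must be high, medium, or low")
    return ResearchResult(data["facts"], data["sources"], data["confidence"])


good = parse_research_output(
    '{"facts": ["fact 1"], "sources": ["example source"], "confidence": "high"}'
)
print(good)
```

Raising on a malformed response gives the orchestrator one clear failure signal, which it can treat the same way as a low-confidence result.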

Debugging Multi-Agent Systems

The hardest part of multi-agent systems isn't building them — it's figuring out what went wrong.

Essential practices:

Log every agent call. Store input, output, model, latency, and token count for every agent invocation. You need this to debug failures.
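A minimal sketch of such a log, assuming each agent function returns its output together with a token count. `AgentCallRecord`, `CALL_LOG`, `logged_call`, and the stub agent are hypothetical names, and the in-memory list stands in for whatever log store you use.

```python
import time
from dataclasses import asdict, dataclass
from typing import Callable, List, Tuple


@dataclass
class AgentCallRecord:
    agent: str
    model: str
    input: str
    output: str
    latency_s: float
    tokens: int


CALL_LOG: List[AgentCallRecord] = []


def logged_call(
    agent: str,
    model: str,
    agent_fn: Callable[[str], Tuple[str, int]],
    user_input: str,
) -> str:
    """Run agent_fn, record input, output, model, latency, and tokens, return the output."""
    start = time.perf_counter()
    output, tokens = agent_fn(user_input)
    latency = time.perf_counter() - start
    CALL_LOG.append(AgentCallRecord(agent, model, user_input, output, latency, tokens))
    return output


def stub_research_agent(question: str) -> Tuple[str, int]:
    # Stand-in for an LLM call; the token count is a made-up constant.
    return f"facts about {question}", 42


answer = logged_call("research", "stub-model", stub_research_agent, "fan-out")
print(asdict(CALL_LOG[-1]))
```

With the Anthropic SDK, the token count would typically come from the response's `usage` field rather than from the agent function itself.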

Test agents individually. Before testing the full system, test each agent with edge case inputs. A bad research agent will ruin every downstream step.

Add confidence signals. Ask agents to flag when they're uncertain. An orchestrator that knows an agent is uncertain can ask for clarification or escalate rather than proceeding with bad data.

Implement fallbacks. If an agent fails or returns low confidence, have a fallback path — either a simpler agent, a cached result, or a human escalation.
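One way to sketch that fallback chain, assuming each agent returns a dict with a `confidence` key. `run_with_fallbacks`, the three stub agents, and the `escalate_to_human` status value are all hypothetical.

```python
from typing import Callable, Dict, List

AgentFn = Callable[[str], Dict[str, str]]


def run_with_fallbacks(task: str, agents: List[AgentFn]) -> Dict[str, str]:
    """Try agents in order; return the first result that is not low confidence."""
    for agent in agents:
        try:
            result = agent(task)
        except Exception:
            continue  # a crashed agent counts as a failed attempt
        if result.get("confidence") in ("high", "medium"):
            return result
    # Nothing usable came back: hand the task to a person.
    return {"answer": "", "confidence": "low", "status": "escalate_to_human"}


def flaky_primary(task: str) -> Dict[str, str]:
    raise TimeoutError("primary agent timed out")


def simpler_agent(task: str) -> Dict[str, str]:
    return {"answer": f"partial answer for {task}", "confidence": "low"}


def cached_result(task: str) -> Dict[str, str]:
    return {"answer": f"cached answer for {task}", "confidence": "medium"}


outcome = run_with_fallbacks("pricing", [flaky_primary, simpler_agent, cached_result])
print(outcome)
```

Ordering the list from most capable to most conservative keeps the happy path fast while guaranteeing that every task ends in either a usable answer or an explicit escalation.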

Real-World Example: Lead Gen Multi-Agent

Our lead gen tool for an agency client uses three agents:

- Enrichment Agent — takes a company name, finds LinkedIn URL, employee count, tech stack, recent news

- Fit Scoring Agent — scores the lead against the client's ICP (ideal customer profile)

- Personalization Agent — writes a custom first line for the outreach email based on enrichment data

The Enrichment Agent runs first (sequential), then the Fit Scoring and Personalization Agents run in parallel on its output. Total time: ~8 seconds per lead. Before this system, the same workflow took 50 minutes manually.
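The shape of that hybrid flow can be sketched as follows. This is not the production tool: the three agent functions are hypothetical stubs, and the employee count, tech stack, and scoring rule are placeholder values chosen only to make the example run.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Any, Dict


def enrichment_agent(company: str) -> Dict[str, Any]:
    # Placeholder profile; a real agent would look these values up.
    return {"company": company, "employee_count": 120, "tech_stack": ["Python"]}


def fit_scoring_agent(profile: Dict[str, Any]) -> int:
    # Placeholder ICP rule: companies with 50+ employees score high.
    return 80 if profile["employee_count"] >= 50 else 20


def personalization_agent(profile: Dict[str, Any]) -> str:
    return f"Saw that {profile['company']} builds on {profile['tech_stack'][0]}."


def process_lead(company: str) -> Dict[str, Any]:
    # Sequential phase: both later agents need the enriched profile.
    profile = enrichment_agent(company)
    # Parallel phase: scoring and personalization do not depend on each other.
    with ThreadPoolExecutor(max_workers=2) as executor:
        score_future = executor.submit(fit_scoring_agent, profile)
        line_future = executor.submit(personalization_agent, profile)
        return {
            "profile": profile,
            "fit_score": score_future.result(),
            "first_line": line_future.result(),
        }


lead = process_lead("Acme")
print(lead["fit_score"], lead["first_line"])
```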
