How We Use a GSC MCP Server and DataForSEO to Generate SEO-Ready Blog Posts Automatically

A behind-the-scenes look at how Maximal Studio uses a Google Search Console MCP server and the DataForSEO API inside Claude Code to turn real search data into fully structured blog drafts, without a content team.

The Problem With Most "AI Content" Workflows

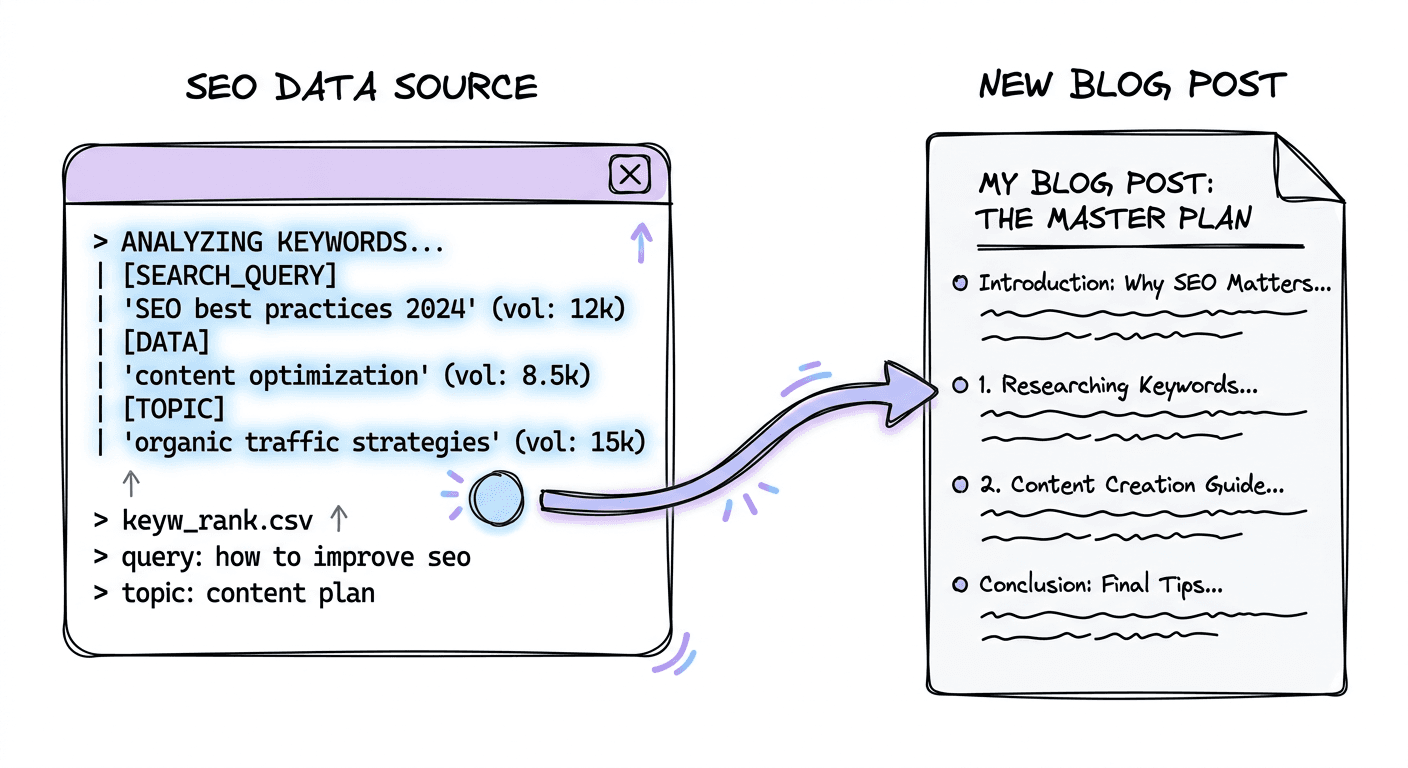

Most people's AI content workflow looks like this:

- Open ChatGPT

- Type "write me a blog post about X"

- Get something generic

- Post it and wonder why it doesn't rank

The output sounds fine. It reads fine. But it's built on nothing — no keyword data, no search intent, no understanding of what your site already ranks for or where the gaps are.

We don't do it that way.

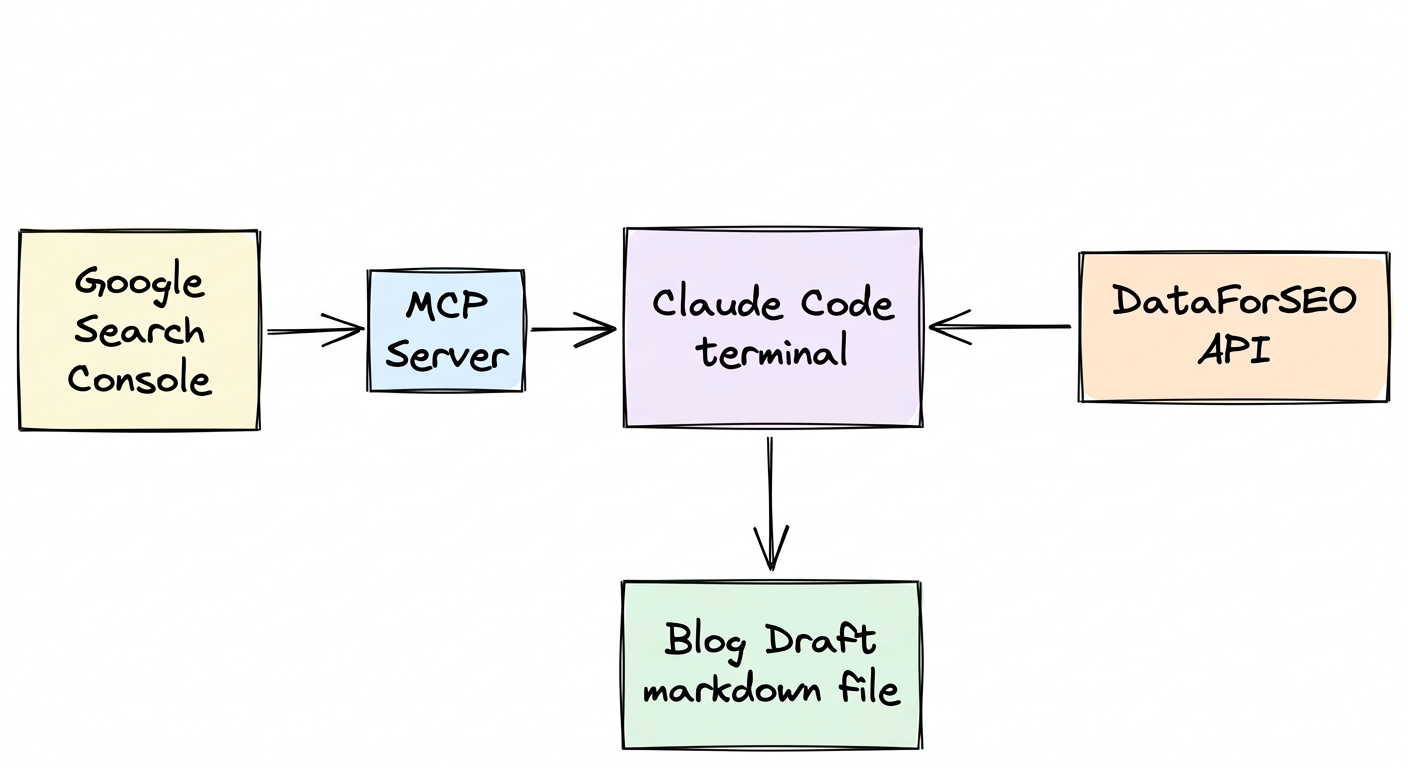

At Maximal Studio, our blog content pipeline starts with real data from two sources: Google Search Console (to see what's actually happening on our own domain) and DataForSEO (to see what the broader search landscape looks like). Both feed directly into Claude Code, GSC through an MCP server and DataForSEO through a custom skill, so the AI writing the content is the same one reading the data.

Here's exactly how it works.

What We're Solving For

Before we explain the setup, it helps to understand the problem we were trying to solve.

We needed blog posts that:

- Target keywords with actual search volume — not gut feel

- Match search intent (informational, commercial, navigational)

- Don't cannibalize existing pages we already rank for

- Are structured around what Google is already rewarding in our niche

- Ship fast enough to actually publish consistently

Doing this manually means switching between Search Console, a keyword tool, a SERP checker, a content brief tool, and then a writing tool. Every context switch is friction. Friction kills consistency.

Our answer was to collapse all of that into a single agentic workflow where Claude has eyes on the data directly.

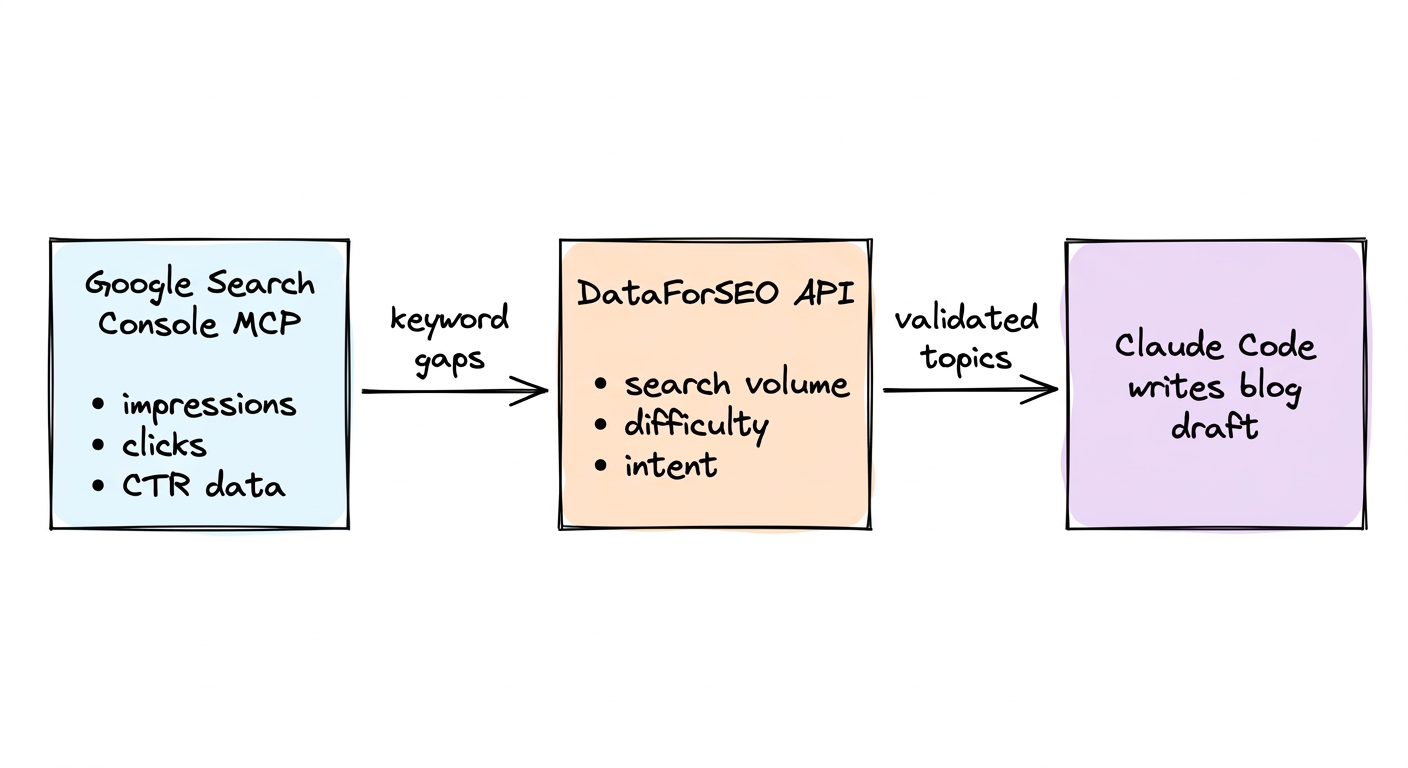

Part 1: The GSC MCP Server

The Google Search Console MCP server connects Claude Code directly to our Search Console data. No exporting CSVs. No copy-pasting into a spreadsheet.

When we're planning a blog post, Claude can query:

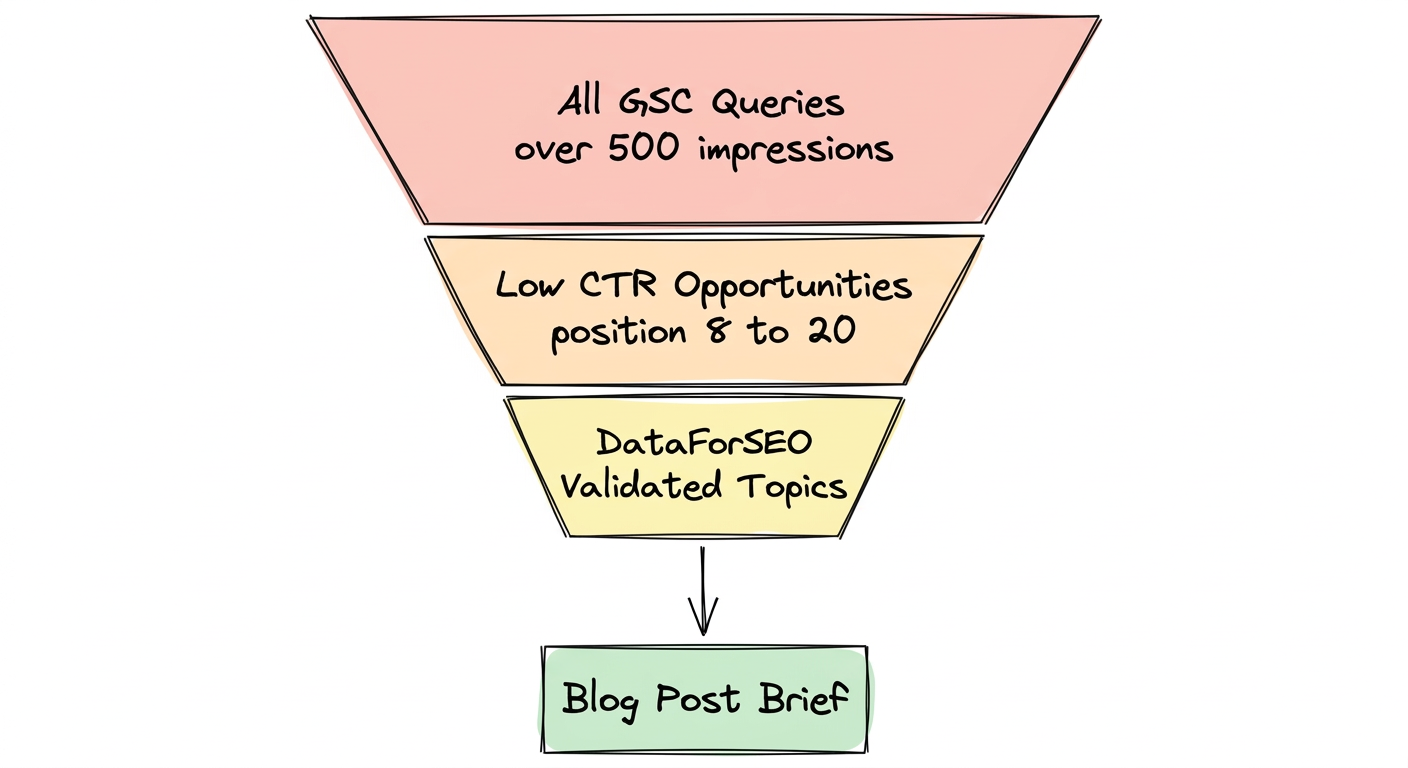

- Which queries are we appearing for but not ranking well on? (Impressions high, clicks low — position 8–20, the classic opportunity zone)

- What pages are already getting traction? (So we don't write something that competes with ourselves)

- What's trending over the last 28 days vs. the prior period? (Catching momentum before it peaks)

- Which URLs have indexing issues? (So we don't waste time creating content that won't get crawled)
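The "last 28 days vs. the prior period" question comes down to two equal, back-to-back date windows and a per-query growth figure. Here is a minimal Python sketch of that arithmetic. The function names and sample numbers are ours, made up for illustration; they are not part of the GSC MCP server.

```python
from datetime import date, timedelta


def comparison_windows(end: date, days: int = 28):
    """Return (current, previous) inclusive date ranges of equal length."""
    current_start = end - timedelta(days=days - 1)
    previous_end = current_start - timedelta(days=1)
    previous_start = previous_end - timedelta(days=days - 1)
    return (current_start, end), (previous_start, previous_end)


def impression_growth(current: dict, previous: dict) -> list:
    """Rank queries by relative impression growth, highest first."""
    growth = []
    for query, now in current.items():
        before = previous.get(query, 0)
        if before == 0:
            continue  # no baseline, so a growth ratio is undefined
        growth.append((query, (now - before) / before))
    return sorted(growth, key=lambda item: item[1], reverse=True)


# Illustrative impression totals per query (invented numbers).
current_period = {"gsc mcp server": 150, "ai content automation": 90, "seo skill": 40}
previous_period = {"gsc mcp server": 100, "ai content automation": 100}

windows = comparison_windows(date(2024, 3, 28))
ranked = impression_growth(current_period, previous_period)
```

Queries with no impressions in the prior window are skipped here rather than treated as infinite growth; whether to surface them separately is a judgment call.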

A typical query we run at the start of a content session:

"Show me queries where we have more than 500 impressions but less than 20 clicks over the last 28 days, sorted by impressions."

That list is the raw material. Those are keywords Google has already told us our site is relevant for — we're just not converting the visibility into traffic yet. The content we write targets exactly those gaps.
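If you want to apply the same cut to exported data yourself, the filter is short. The sketch below assumes rows shaped like the Search Console Search Analytics response (a `keys` list plus `clicks`, `impressions`, and `position`; real rows also carry `ctr`). The sample rows and the function name are invented for illustration.

```python
def opportunity_queries(rows, min_impressions=500, max_clicks=20):
    """Keep high-impression, low-click queries, sorted by impressions."""
    hits = [
        row for row in rows
        if row["impressions"] > min_impressions and row["clicks"] < max_clicks
    ]
    return sorted(hits, key=lambda row: row["impressions"], reverse=True)


# Invented sample rows; real data would come from the last 28 days of GSC.
rows = [
    {"keys": ["dataforseo claude"], "clicks": 3, "impressions": 650, "position": 14.1},
    {"keys": ["ai content automation"], "clicks": 45, "impressions": 2100, "position": 6.3},
    {"keys": ["gsc mcp server"], "clicks": 12, "impressions": 1400, "position": 11.2},
    {"keys": ["seo blog generator"], "clicks": 1, "impressions": 120, "position": 18.7},
]

shortlist = [row["keys"][0] for row in opportunity_queries(rows)]
```

With these sample rows, the shortlist keeps the two queries that clear 500 impressions while staying under 20 clicks, with the higher-impression query first.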

Part 2: DataForSEO for Keyword and SERP Intelligence

GSC tells us what's happening on our own domain. DataForSEO tells us what's happening in the broader market.

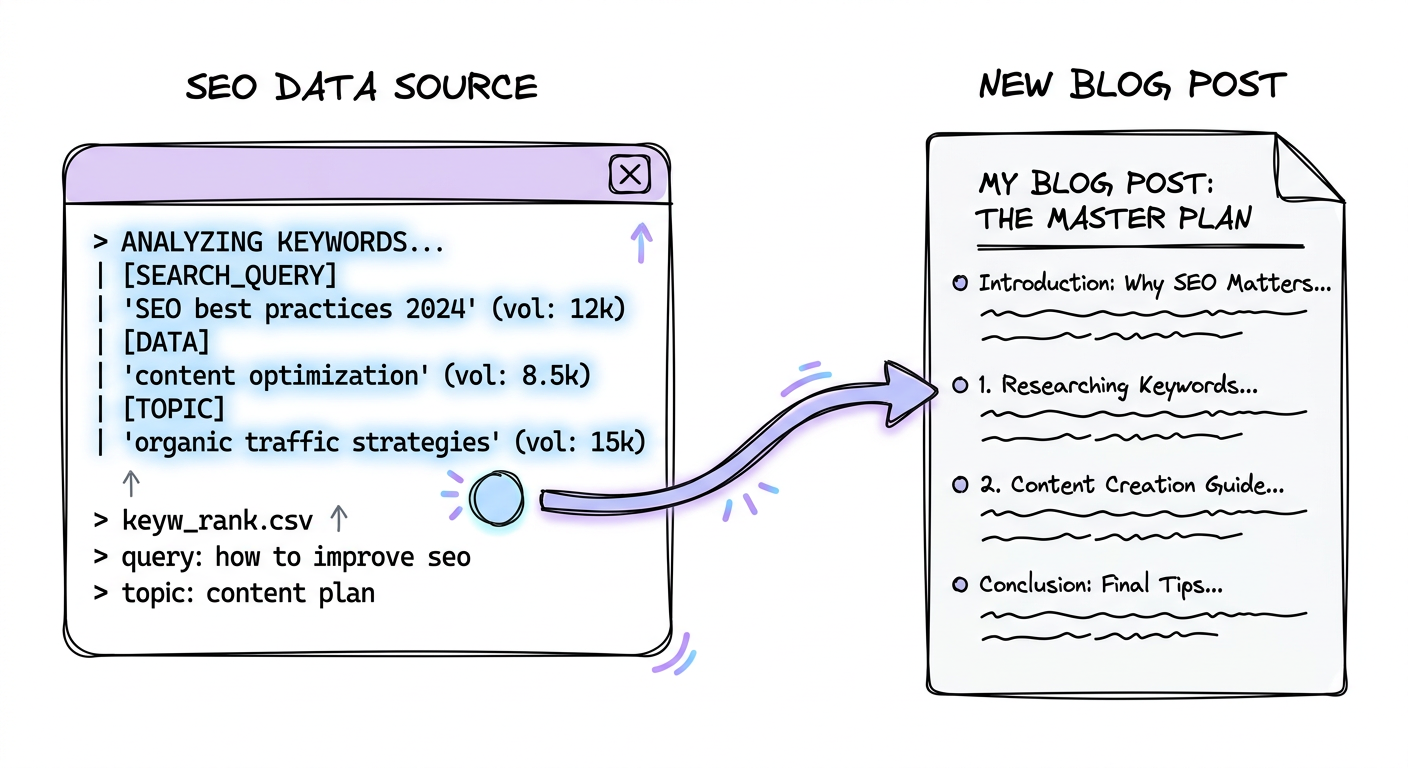

We use the DataForSEO API (via a /dataforseo skill in Claude Code) to pull:

- Search volume and keyword difficulty for target queries

- SERP features — is there a featured snippet? A people-also-ask box? Video results? This changes how we structure the post

- Top-ranking competitor pages for a given query — what are they covering, how long are they, what questions do they answer

- Related keywords and long-tail variations — to find the version of a topic with high intent and low competition

- Keyword clustering — grouping semantically related terms so one post covers a full topic rather than chasing a single phrase
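To make the clustering idea concrete, here is a deliberately simple stand-in: greedy grouping by word overlap (Jaccard similarity). This is not how DataForSEO clusters keywords and not necessarily what our skill does. Word overlap is lexical rather than truly semantic, and the 0.5 threshold is an arbitrary assumption. It only illustrates why several phrasings can belong in one post.

```python
def tokens(keyword: str) -> set:
    """Lowercased word set for a keyword phrase."""
    return set(keyword.lower().split())


def jaccard(a: set, b: set) -> float:
    """Share of words two phrases have in common (0.0 to 1.0)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def cluster_keywords(keywords, threshold=0.5):
    """Greedily attach each keyword to the first cluster whose seed it overlaps with."""
    clusters = []
    for keyword in keywords:
        for cluster in clusters:
            # Compare against the cluster's first (seed) keyword only.
            if jaccard(tokens(keyword), tokens(cluster[0])) >= threshold:
                cluster.append(keyword)
                break
        else:
            clusters.append([keyword])
    return clusters


clusters = cluster_keywords([
    "ai content automation",
    "ai content automation tools",
    "content automation ai",
    "gsc mcp server",
])
```

Here the first three phrases land in one cluster and "gsc mcp server" stands alone, which maps to one post covering the automation topic and a separate one for the MCP server.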

In practice, once we have a shortlist of opportunity keywords from GSC, we pass each one through DataForSEO to validate:

- Is there enough monthly search volume to be worth targeting?

- How hard is the competition? (Domain rating of top 10 results)

- What's the search intent — are people looking for information or trying to buy something?

- What SERP features should we optimize for?

If a keyword passes that filter, it becomes a brief. If it doesn't, we move to the next one.
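That pass/fail step can be written down as a small gate. The sketch below is an assumption-heavy illustration: the field names, the 0-100 difficulty score, and every threshold are hypothetical placeholders, not values we are recommending or numbers DataForSEO prescribes.

```python
from dataclasses import dataclass


@dataclass
class KeywordMetrics:
    keyword: str
    monthly_volume: int   # searches per month
    difficulty: int       # hypothetical 0-100 competition score
    intent: str           # e.g. "informational", "commercial", "navigational"


def passes_filter(
    metrics: KeywordMetrics,
    min_volume: int = 100,          # placeholder threshold
    max_difficulty: int = 40,       # placeholder threshold
    allowed_intents=("informational", "commercial"),
) -> bool:
    """True when a keyword is worth turning into a brief."""
    return (
        metrics.monthly_volume >= min_volume
        and metrics.difficulty <= max_difficulty
        and metrics.intent in allowed_intents
    )


# Invented candidates to show both outcomes.
candidates = [
    KeywordMetrics("gsc mcp server", 320, 22, "informational"),
    KeywordMetrics("seo", 90000, 95, "informational"),
    KeywordMetrics("maximal studio login", 150, 5, "navigational"),
]
briefs = [m.keyword for m in candidates if passes_filter(m)]
```

Keeping the thresholds as parameters makes it easy to loosen them for a newer site or tighten them for a competitive niche.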

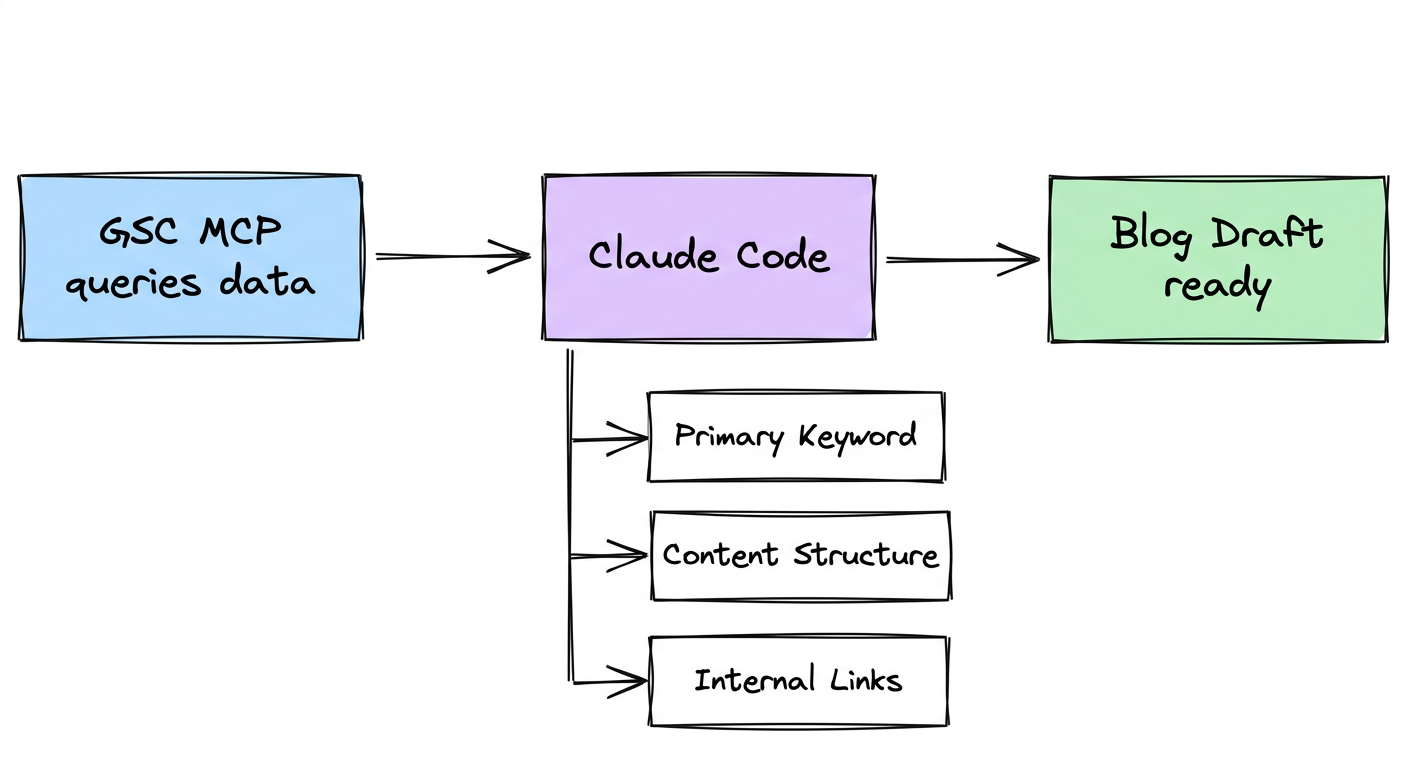

Part 3: How Claude Assembles the Brief and Draft

This is where it comes together.

With GSC data and DataForSEO output both available in the same context window, Claude can write a content brief that's grounded in actual data — not assumptions.

The brief includes:

- Primary keyword + secondary keywords from the clustering output

- Target word count (based on top competitor average)

- Recommended structure (H2s and H3s that match people-also-ask boxes and competitor headings)

- SERP feature to target (featured snippet format, FAQ schema, etc.)

- Internal linking suggestions (based on existing pages from GSC that are relevant)

- The hook — what angle makes this post different from what already ranks

From the brief, Claude writes a full draft — same session, no copy-pasting. The draft follows our established style, uses the keyword naturally, and is structured to match what Google is already rewarding for that query.
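For readers who think in data structures, the brief can be pictured as a small record. This is a sketch of one possible shape, not the exact format Claude produces for us. The class, the field names, and the rounding rule for word count are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ContentBrief:
    primary_keyword: str
    secondary_keywords: list
    target_word_count: int
    headings: list          # H2/H3 candidates from PAA boxes and competitor pages
    serp_feature: str       # e.g. "featured snippet", "FAQ schema"
    internal_links: list    # existing URLs surfaced from GSC
    hook: str               # the angle that differs from what already ranks


def target_word_count(competitor_word_counts, round_to=100):
    """Average the top competitors' lengths and round to a tidy number."""
    average = mean(competitor_word_counts)
    return int(round(average / round_to) * round_to)


# Invented inputs to show the record being filled in.
brief = ContentBrief(
    primary_keyword="gsc mcp server",
    secondary_keywords=["google search console mcp", "search console claude code"],
    target_word_count=target_word_count([1800, 2200, 1500]),
    headings=["What is a GSC MCP server?", "How do you connect it to Claude Code?"],
    serp_feature="featured snippet",
    internal_links=["/blog/ai-content-workflow"],
    hook="Shows the queries we actually run, not a feature list",
)
```

In this example the three competitor lengths average to roughly 1,833 words, which rounds to a 1,800-word target.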

The Actual Setup (If You Want to Replicate It)

Here's what the stack looks like under the hood:

GSC MCP Server — We run a local MCP server that wraps the Google Search Console API. It's configured in Claude Code's MCP settings. Once connected, Claude can call tools like get_search_analytics, inspect_url_enhanced, and check_indexing_issues directly from the conversation.

DataForSEO Skill — We have a /dataforseo skill registered in Claude Code that handles authentication, constructs API requests, and returns formatted CSV or JSON output. A typical call looks like: /dataforseo keyword_data --keyword "ai content automation" --country IN.
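We are not publishing the skill's internals here, but a request for search volume might be built roughly like the sketch below. It assumes DataForSEO's Google Ads search volume "live" endpoint, HTTP Basic authentication, and a location code of 2356 for India; check all three against the current DataForSEO documentation before relying on them. The function name, the country lookup table, and the placeholder credentials are hypothetical. The request is only constructed, never sent.

```python
import base64
import json
import urllib.request

# Assumed endpoint; confirm against the DataForSEO docs.
API_URL = "https://api.dataforseo.com/v3/keywords_data/google_ads/search_volume/live"

# Assumed location codes; the authoritative list comes from DataForSEO itself.
LOCATION_CODES = {"IN": 2356, "US": 2840}


def build_search_volume_request(keyword, country, login, password):
    """Build (but do not send) a POST request for one keyword's search volume."""
    token = base64.b64encode(f"{login}:{password}".encode()).decode()
    payload = [{
        "keywords": [keyword],
        "location_code": LOCATION_CODES[country],
        "language_code": "en",
    }]
    return urllib.request.Request(
        API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Basic {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# Placeholder credentials; real ones belong in environment variables.
request = build_search_volume_request(
    "ai content automation", "IN", "your-login", "your-password"
)
# urllib.request.urlopen(request) would perform the call; omitted on purpose.
```

Keeping request construction separate from sending makes the payload easy to inspect or log before any API credits are spent.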

Blog Draft Format — Every draft outputs in a consistent Markdown format — title, meta description, target keyword, CTA, and section headers — matching the conventions in our blog-drafts/ folder. This makes it easy to review and publish without reformatting.
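As a rough picture of what "consistent format" means, here is a small renderer that lays out those same fields as Markdown. The exact layout in our blog-drafts/ folder is not shown in this post, so treat the field labels and ordering below as a hypothetical example rather than our template.

```python
def render_draft(title, meta_description, target_keyword, cta, sections):
    """Render a draft as Markdown: metadata block first, then H2 sections."""
    lines = [
        f"# {title}",
        "",
        f"Meta description: {meta_description}",
        f"Target keyword: {target_keyword}",
        f"CTA: {cta}",
        "",
    ]
    for heading, body in sections:
        lines += [f"## {heading}", "", body, ""]
    # Single trailing newline keeps diffs clean when drafts are committed.
    return "\n".join(lines).rstrip() + "\n"


# Invented content to show the output shape.
draft = render_draft(
    title="How a GSC MCP Server Works",
    meta_description="A short walkthrough of connecting Search Console to Claude Code.",
    target_keyword="gsc mcp server",
    cta="Book a free AI audit",
    sections=[
        ("What It Does", "It exposes Search Console data as tools."),
        ("How We Use It", "We query opportunity keywords before writing."),
    ],
)
```

Because every draft shares one layout, a reviewer always finds the metadata and section headers in the same place.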

The Workflow in One Sentence — Claude reads GSC → identifies opportunity keywords → validates with DataForSEO → writes a structured draft → drops it in the drafts folder → we review and schedule.

What This Actually Saves

Before this workflow, a single blog post involved:

- 30-60 minutes of keyword research

- 20-30 minutes building a content brief

- 1-2 hours writing a first draft

- Multiple tool switches and tab hell

Now the research and brief happen in a single conversation that takes under 5 minutes. The draft is ready for review in another 5-10 minutes. We spend our time editing and adding the specific client stories and context that AI can't generate on its own.

The output isn't "AI content" in the generic sense. It's content built on real data, in our voice, targeting keywords with a documented reason to exist.

The Part That Still Requires Humans

We're not going to pretend this is fully automated.

Claude doesn't know which case studies to reference. It can't pull from a conversation we had with a client last week. It doesn't know that a specific angle already flopped six months ago when we tried it.

That's what the edit pass is for. The draft gives us a skeleton that's 80% of the way there. We add the specifics — real numbers, real situations, real opinions — that make a post actually useful to someone rather than just technically correct.

That ratio is the whole point. We're not replacing the thinking. We're removing the parts that didn't require thinking in the first place.

Who This Works For

This setup makes the most sense if:

- You have an existing site with Search Console data (at least a few months of impressions)

- You publish blog content regularly (or want to, but keep getting blocked by the research overhead)

- You're using Claude Code and can add MCP servers and skills

It works less well if you're starting a brand-new domain with no GSC data, or if your content strategy is purely brand-driven rather than search-driven.

What's Next

We're working on closing the loop — connecting publishing directly, so approved drafts can be staged as pull requests or pushed to a CMS without leaving Claude Code. The goal is a workflow where a content decision in the morning becomes a published post by the afternoon, with the human only in the loop for the parts that actually need judgment.

If you want to see how something like this could work for your business, book a free AI audit. We'll look at your current content workflow, where the friction is, and what a data-driven automation setup would actually look like for you.